Overview

| Metric | Value | Note |
|---|---|---|
| Variable Coverage | 51/51 | All ACE atmosphere variables found |
| Checks Passed | 1/2 | 1 component with issues |
| Storage Ratio | 1.34x | FME 2.11 GB vs Legacy 1.57 GB |
| Timing Overhead | N/A | No timing data available |

Component Summary

| Component | Status | Issues |
|---|---|---|
| EAM | PASS | 0 |
| Cross-Verify | FAIL | 6 |


| Component | Issue |

|---|---|

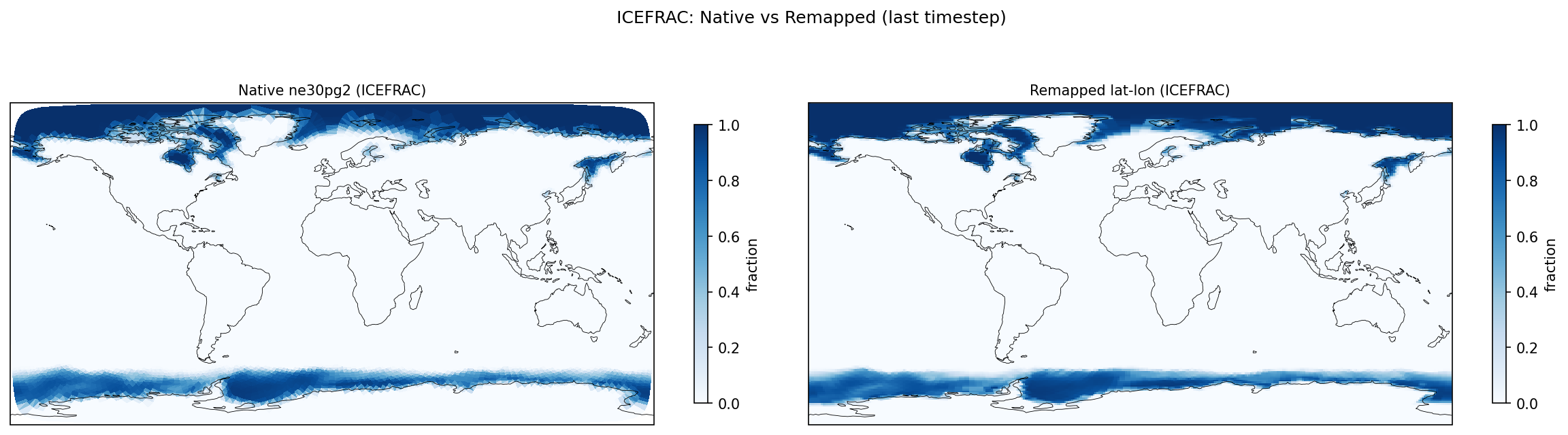

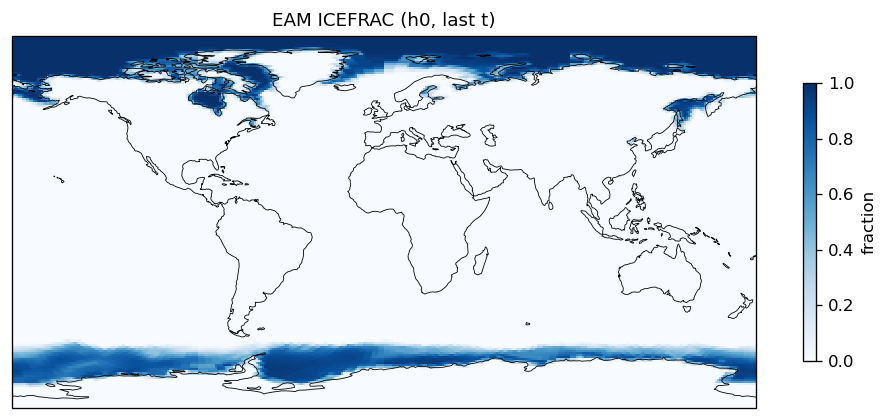

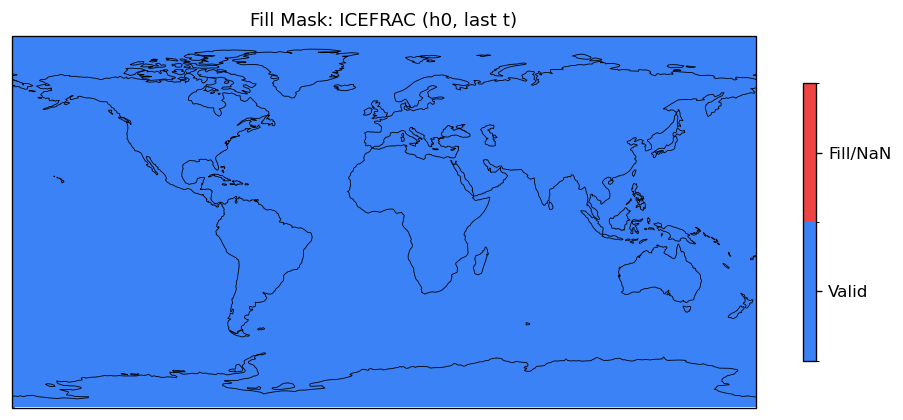

| Cross-Verify | GMEAN ICEFRAC: rel_diff = 0.0966 |

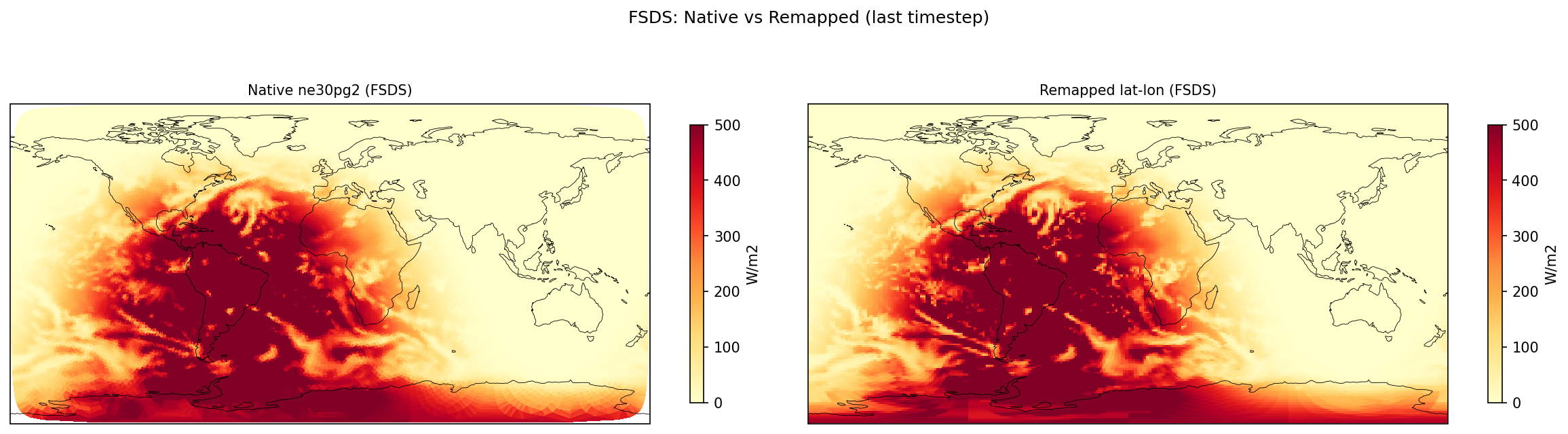

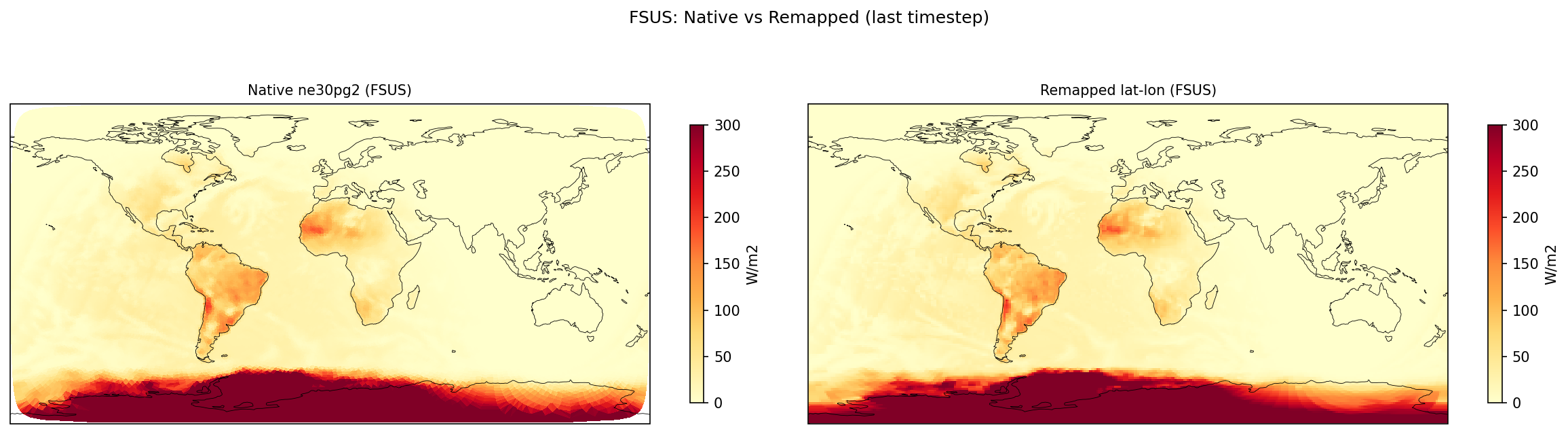

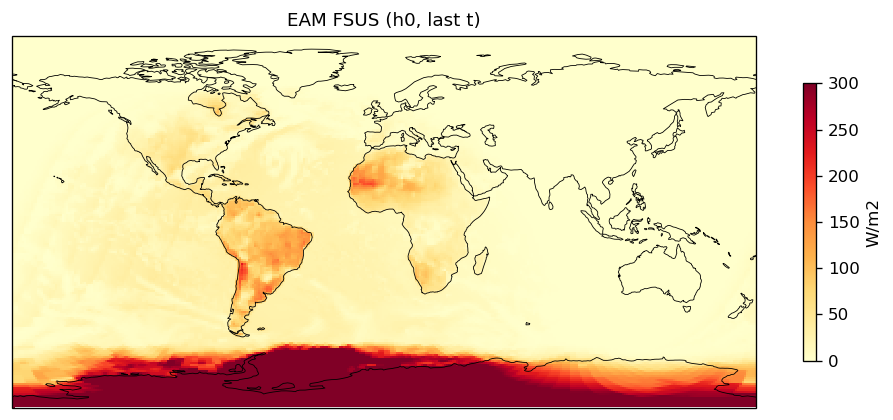

| Cross-Verify | GMEAN FSUS: rel_diff = 0.05457 |

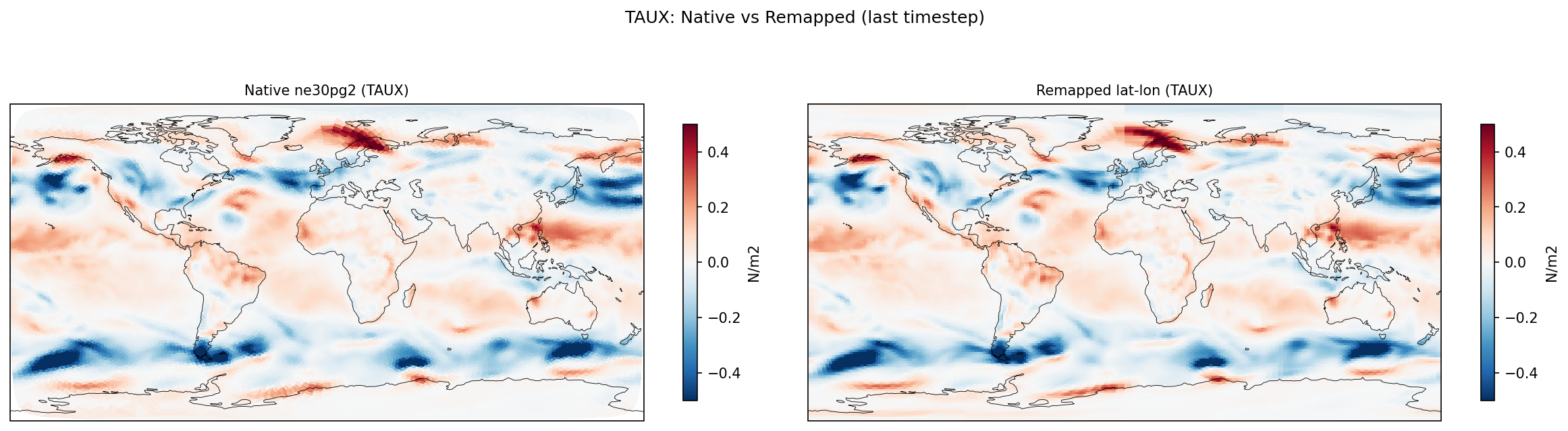

| Cross-Verify | GMEAN TAUX: rel_diff = 0.3693 |

| Cross-Verify | GMEAN TAUY: rel_diff = 0.02138 |

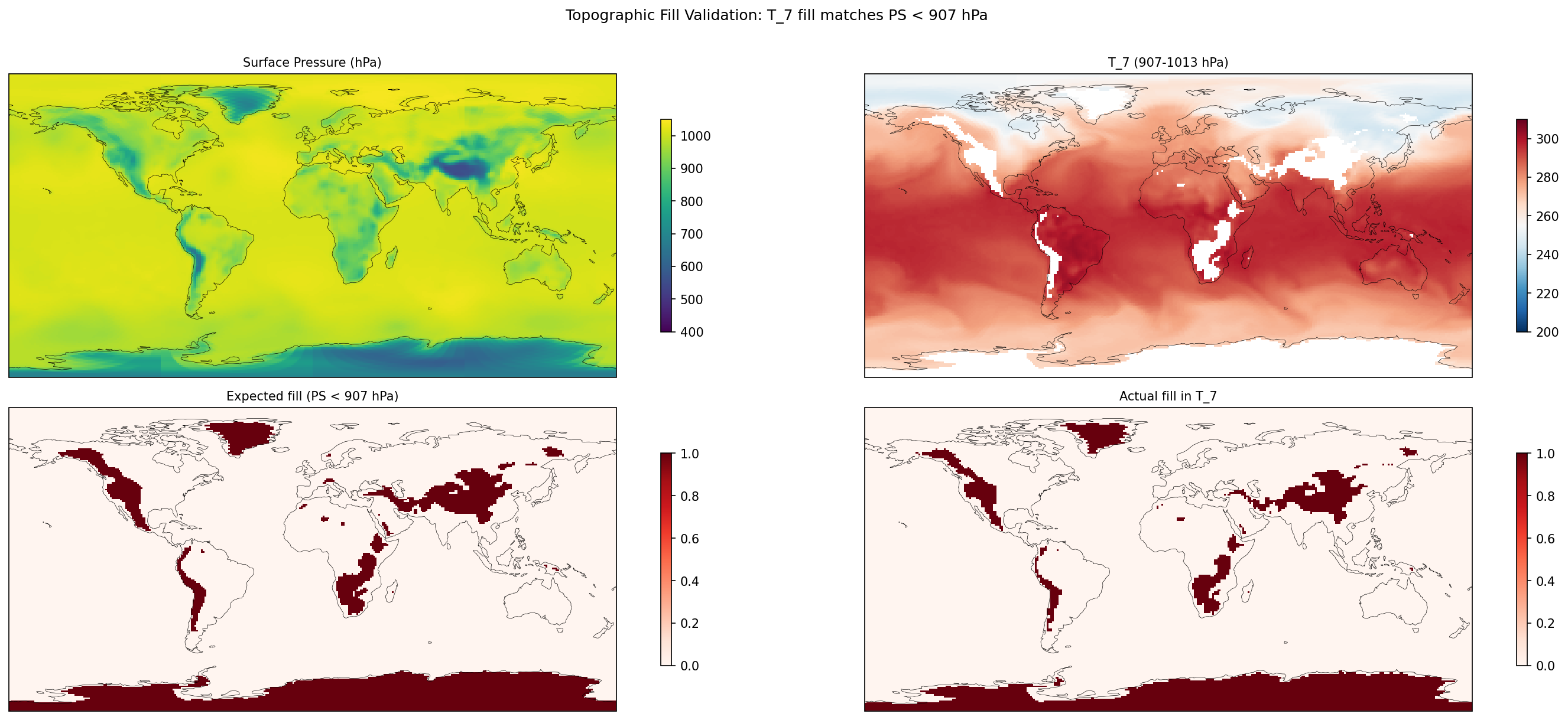

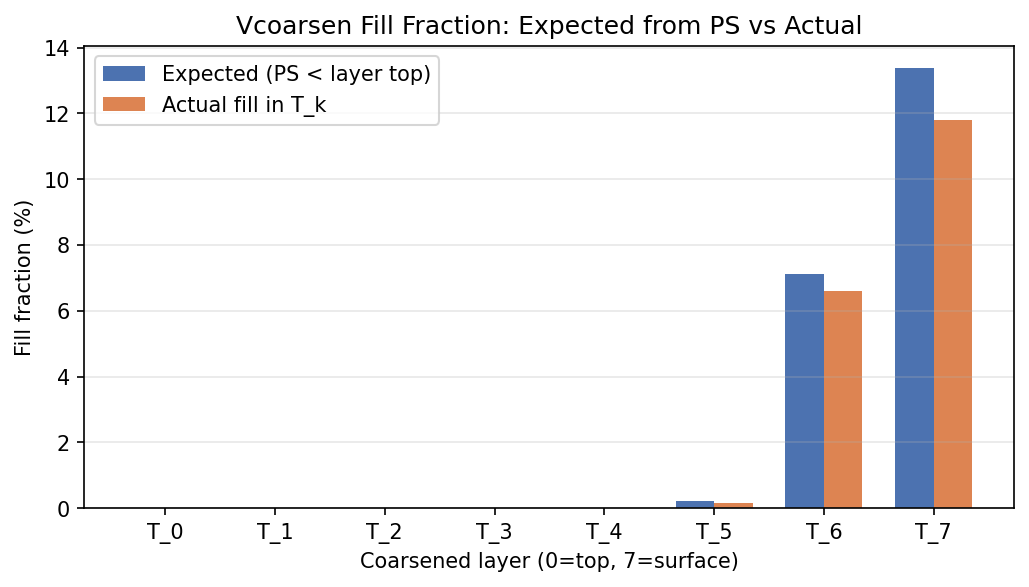

| Cross-Verify | TOPO_FILL: max fill mismatch 1.590% |

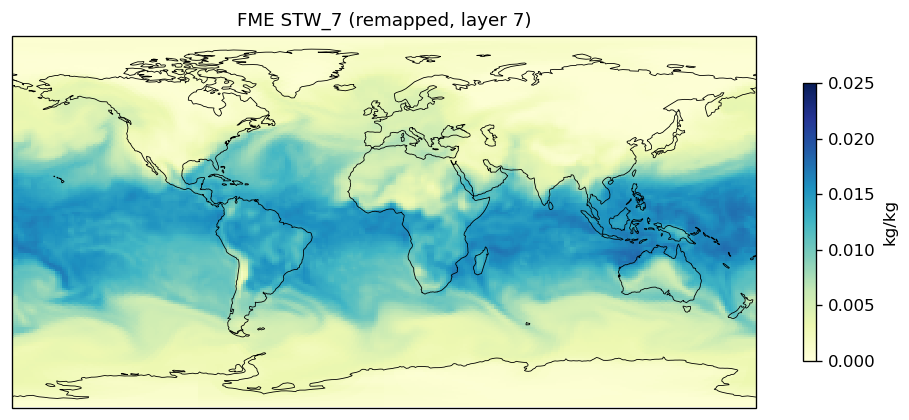

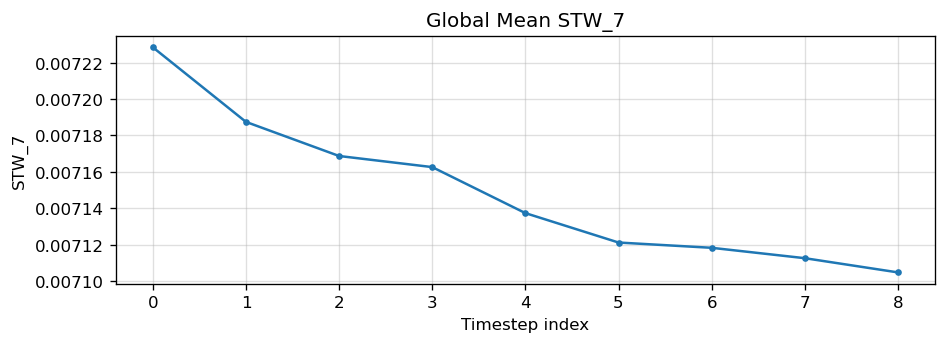

| Cross-Verify | LINEARITY STW: max|rel| = 2.46e-01 |

ACE Atmosphere Variable Requirements

51/51 variables found (all present)

YAML Variable Specification (ACE canonical names)

## ATMOSPHERE
next_step_forcing_names:
  - SOLIN
in_names:
  - land_fraction
  - ocean_fraction
  - sea_ice_fraction
  - PHIS
  - SOLIN
  - PS
  - TS
  - T_0
  - T_1
  - T_2
  - T_3
  - T_4
  - T_5
  - T_6
  - T_7
  - specific_total_water_0
  - specific_total_water_1
  - specific_total_water_2
  - specific_total_water_3
  - specific_total_water_4
  - specific_total_water_5
  - specific_total_water_6
  - specific_total_water_7
  - U_0
  - U_1
  - U_2
  - U_3
  - U_4
  - U_5
  - U_6
  - U_7
  - V_0
  - V_1
  - V_2
  - V_3
  - V_4
  - V_5
  - V_6
  - V_7
out_names:
  - PS
  - TS
  - T_0
  - T_1
  - T_2
  - T_3
  - T_4
  - T_5
  - T_6
  - T_7
  - specific_total_water_0
  - specific_total_water_1
  - specific_total_water_2
  - specific_total_water_3
  - specific_total_water_4
  - specific_total_water_5
  - specific_total_water_6
  - specific_total_water_7
  - U_0
  - U_1
  - U_2
  - U_3
  - U_4
  - U_5
  - U_6
  - U_7
  - V_0
  - V_1
  - V_2
  - V_3
  - V_4
  - V_5
  - V_6
  - V_7
  - LHFLX
  - SHFLX
  - surface_precipitation_rate
  - surface_upward_longwave_flux
  - FLUT
  - FLDS
  - FSDS
  - surface_upward_shortwave_flux
  - top_of_atmos_upward_shortwave_flux
  - tendency_of_total_water_path_due_to_advection
  - TAUX
  - TAUY
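The 51/51 coverage count can be reproduced by taking the union of the three name lists in the specification. The following is a minimal sketch in plain Python; the helper `levels` and the variable `prognostic` are illustrative names, not taken from verify_eam.py.

```python
def levels(prefix, n=8):
    """Return ['prefix_0', ..., 'prefix_{n-1}'] for the 8 coarsened layers."""
    return [f"{prefix}_{k}" for k in range(n)]

# Fields that appear in both in_names and out_names.
prognostic = (
    ["PS", "TS"]
    + levels("T")
    + levels("specific_total_water")
    + levels("U")
    + levels("V")
)

next_step_forcing_names = ["SOLIN"]
in_names = [
    "land_fraction", "ocean_fraction", "sea_ice_fraction", "PHIS", "SOLIN",
] + prognostic
out_names = prognostic + [
    "LHFLX", "SHFLX", "surface_precipitation_rate",
    "surface_upward_longwave_flux", "FLUT", "FLDS", "FSDS",
    "surface_upward_shortwave_flux", "top_of_atmos_upward_shortwave_flux",
    "tendency_of_total_water_path_due_to_advection", "TAUX", "TAUY",
]

# A variable listed in several categories is only required once.
required = set(next_step_forcing_names) | set(in_names) | set(out_names)
print(len(in_names), len(out_names), len(required))  # 39 46 51
```

The 34 shared prognostic names are counted once, which is why 39 inputs and 46 outputs reduce to 51 distinct variables.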

Variable Coverage

| ACE Name | EAM Name | Category | Status |

|---|---|---|---|

SOLIN | SOLIN | forcing, in | FOUND |

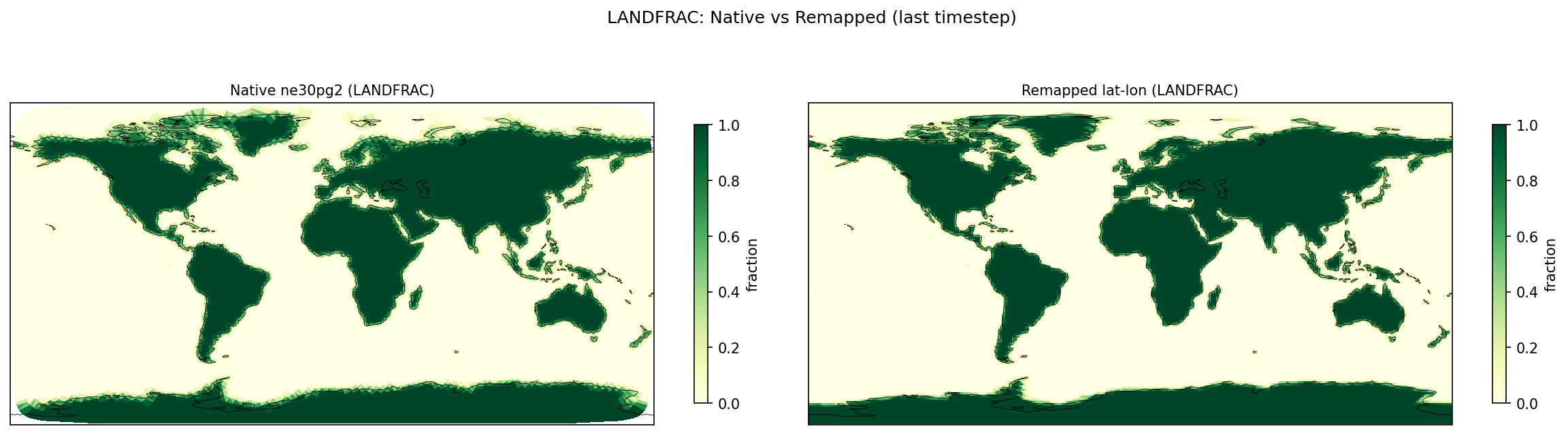

land_fraction | LANDFRAC | in | FOUND |

ocean_fraction | OCNFRAC | in | FOUND |

sea_ice_fraction | ICEFRAC | in | FOUND |

PHIS | PHIS | in | FOUND |

PS | PS | in, out | FOUND |

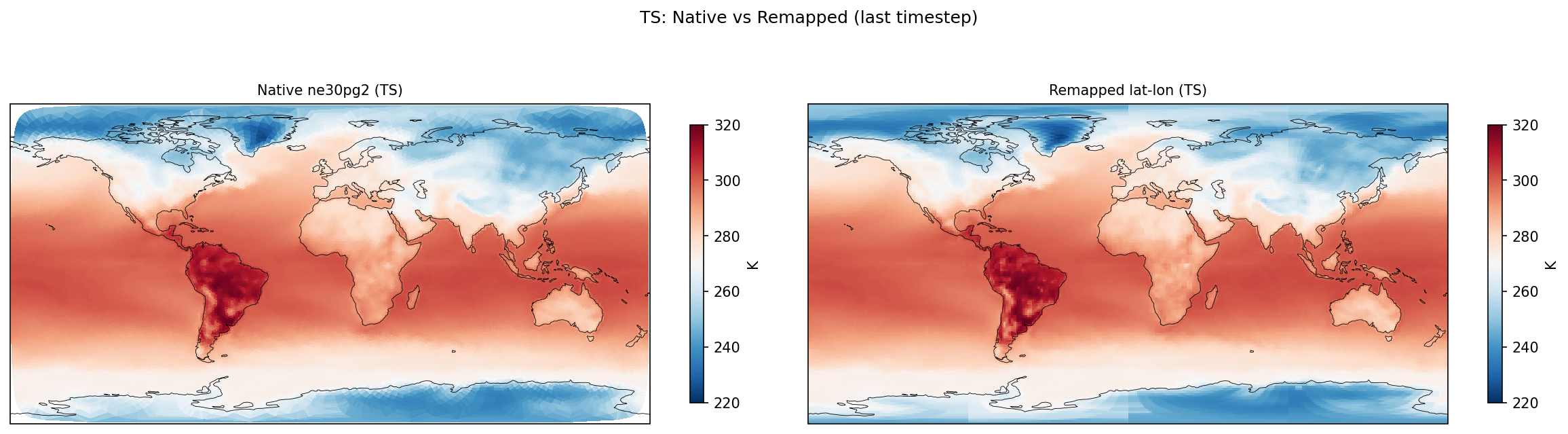

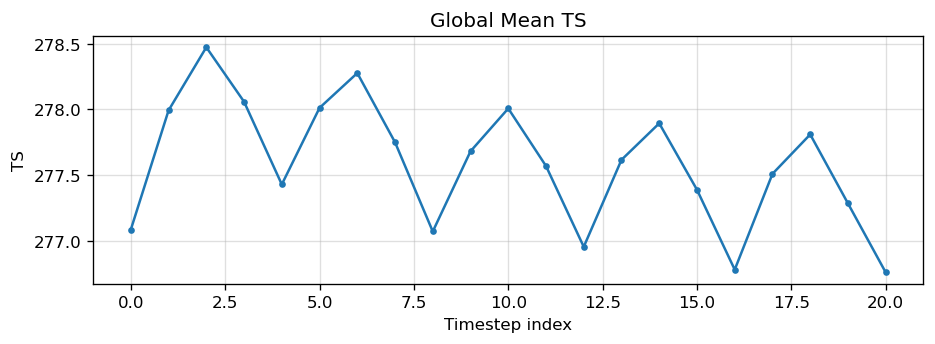

TS | TS | in, out | FOUND |

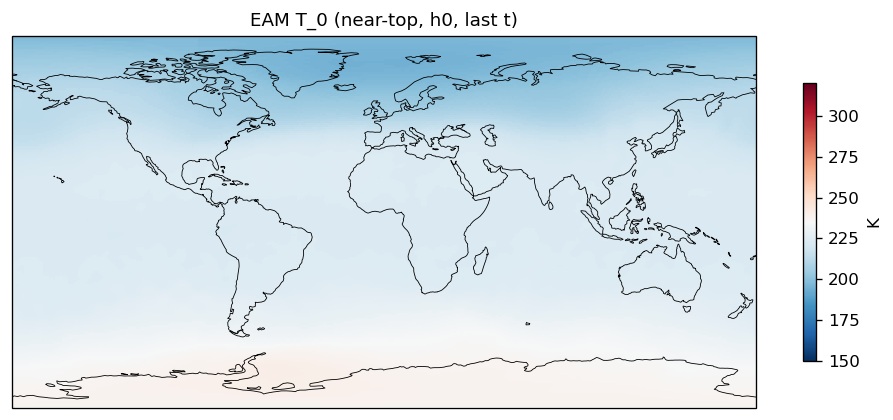

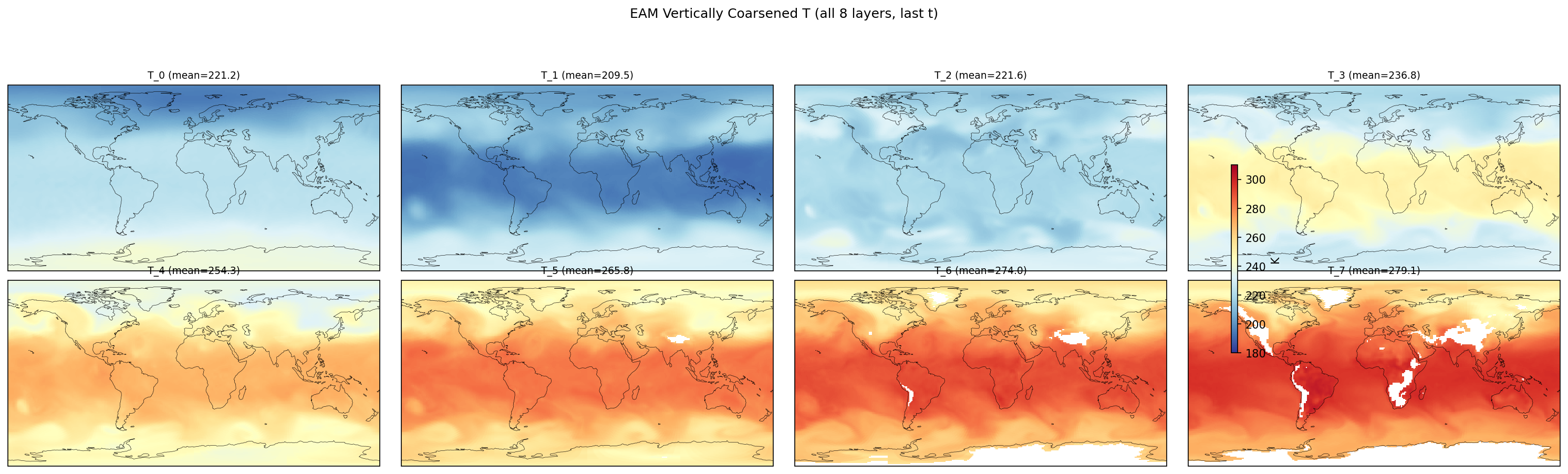

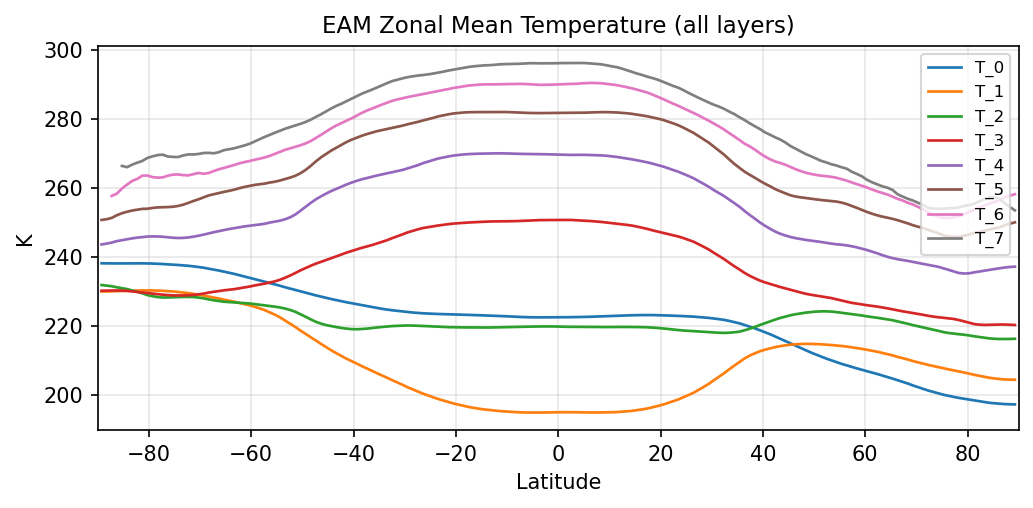

T_0 | T_0 | in, out | FOUND |

T_1 | T_1 | in, out | FOUND |

T_2 | T_2 | in, out | FOUND |

T_3 | T_3 | in, out | FOUND |

T_4 | T_4 | in, out | FOUND |

T_5 | T_5 | in, out | FOUND |

T_6 | T_6 | in, out | FOUND |

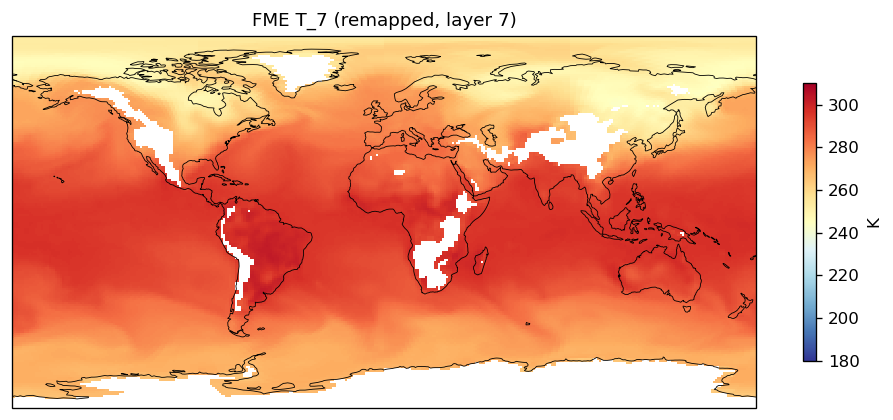

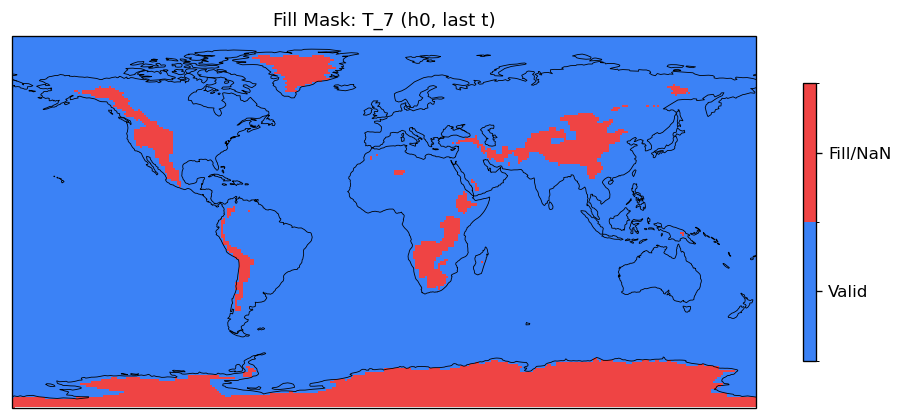

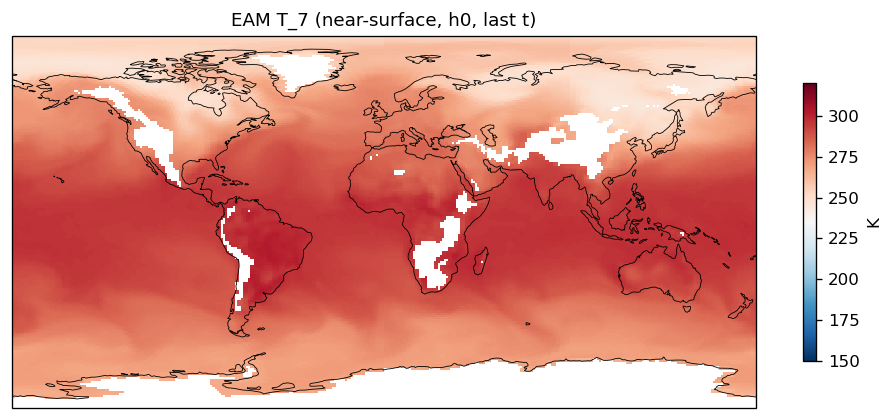

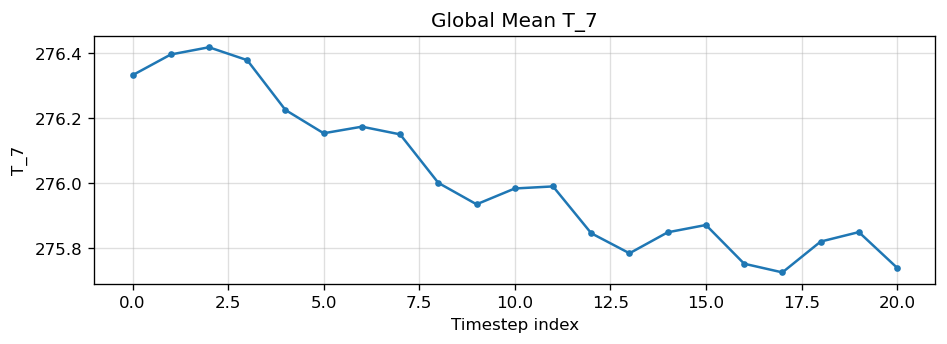

T_7 | T_7 | in, out | FOUND |

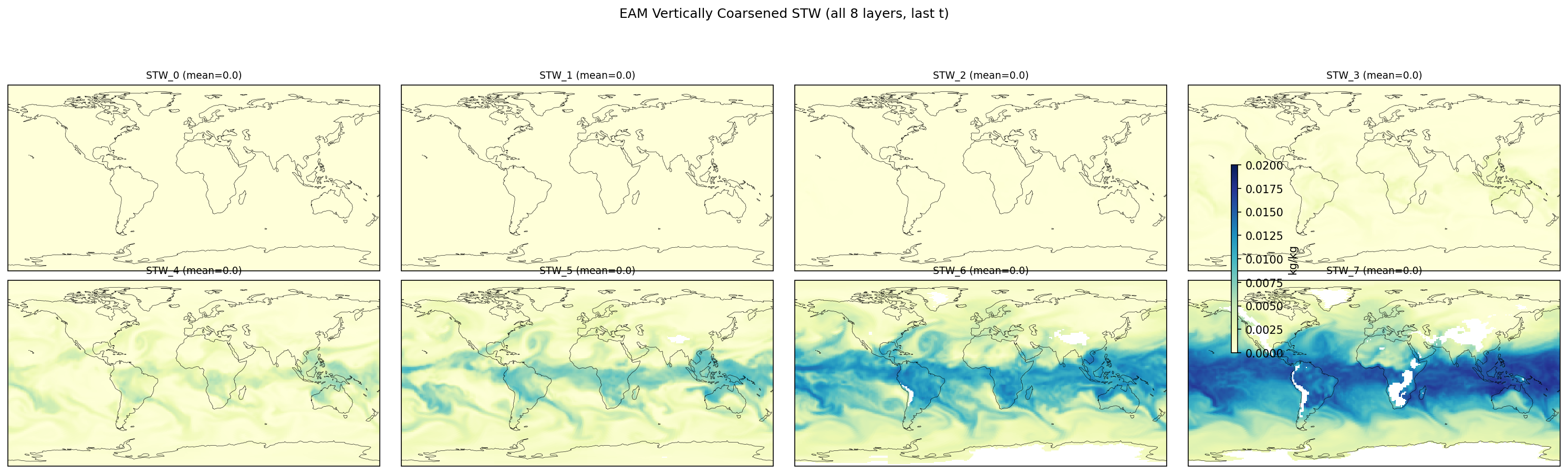

specific_total_water_0 | STW_0 | in, out | FOUND |

specific_total_water_1 | STW_1 | in, out | FOUND |

specific_total_water_2 | STW_2 | in, out | FOUND |

specific_total_water_3 | STW_3 | in, out | FOUND |

specific_total_water_4 | STW_4 | in, out | FOUND |

specific_total_water_5 | STW_5 | in, out | FOUND |

specific_total_water_6 | STW_6 | in, out | FOUND |

specific_total_water_7 | STW_7 | in, out | FOUND |

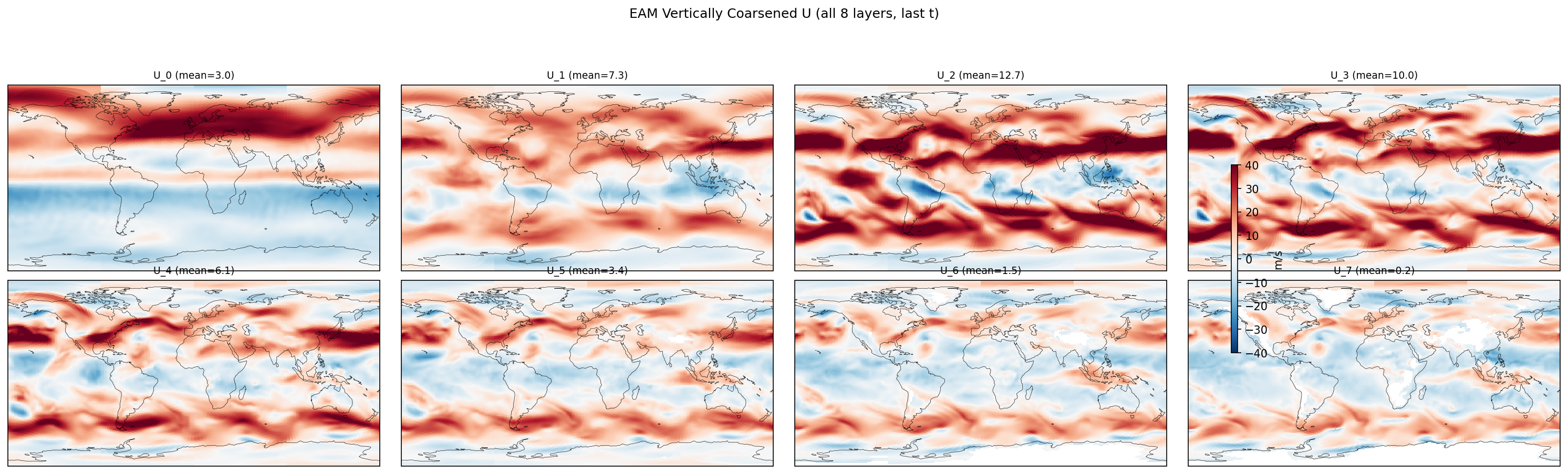

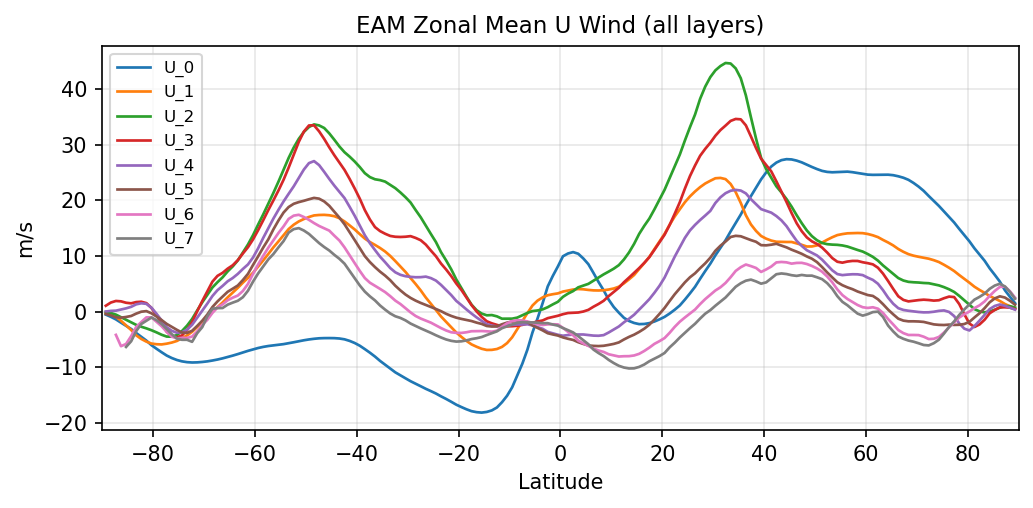

U_0 | U_0 | in, out | FOUND |

U_1 | U_1 | in, out | FOUND |

U_2 | U_2 | in, out | FOUND |

U_3 | U_3 | in, out | FOUND |

U_4 | U_4 | in, out | FOUND |

U_5 | U_5 | in, out | FOUND |

U_6 | U_6 | in, out | FOUND |

U_7 | U_7 | in, out | FOUND |

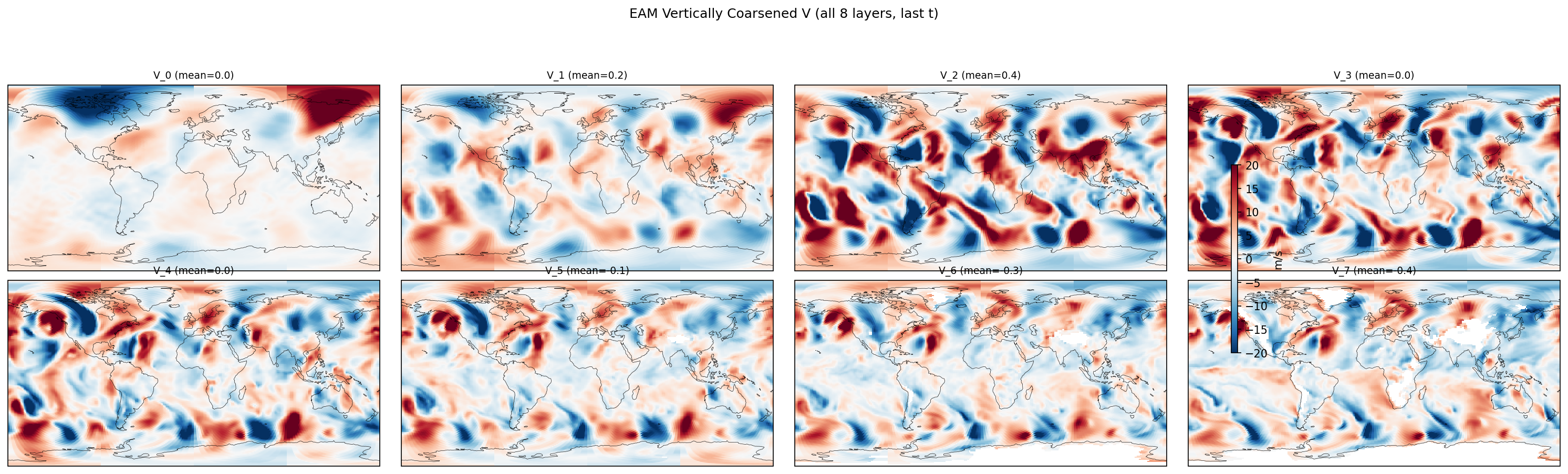

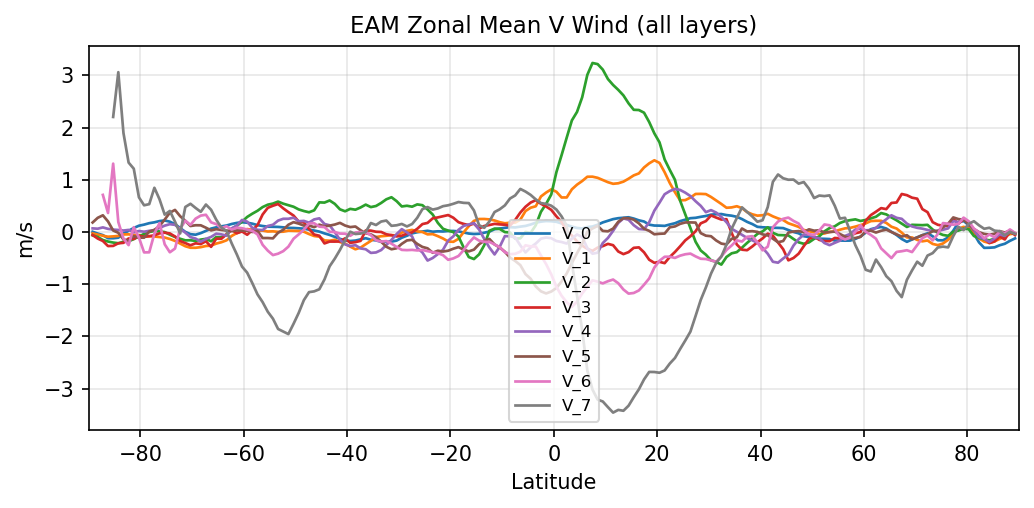

V_0 | V_0 | in, out | FOUND |

V_1 | V_1 | in, out | FOUND |

V_2 | V_2 | in, out | FOUND |

V_3 | V_3 | in, out | FOUND |

V_4 | V_4 | in, out | FOUND |

V_5 | V_5 | in, out | FOUND |

V_6 | V_6 | in, out | FOUND |

V_7 | V_7 | in, out | FOUND |

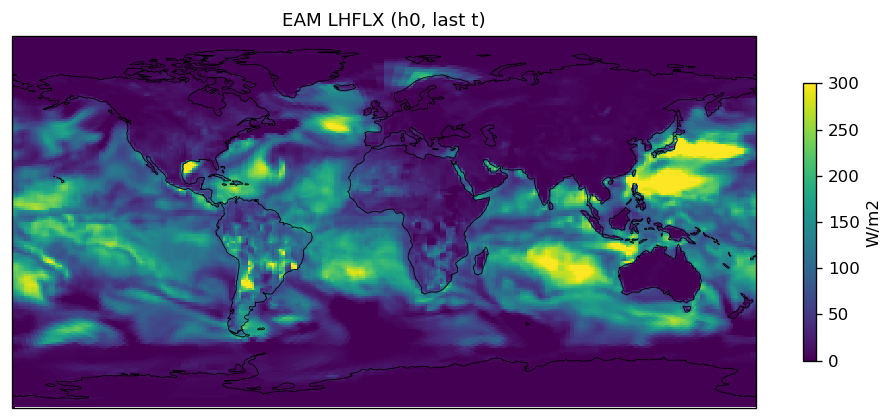

LHFLX | LHFLX | out | FOUND |

SHFLX | SHFLX | out | FOUND |

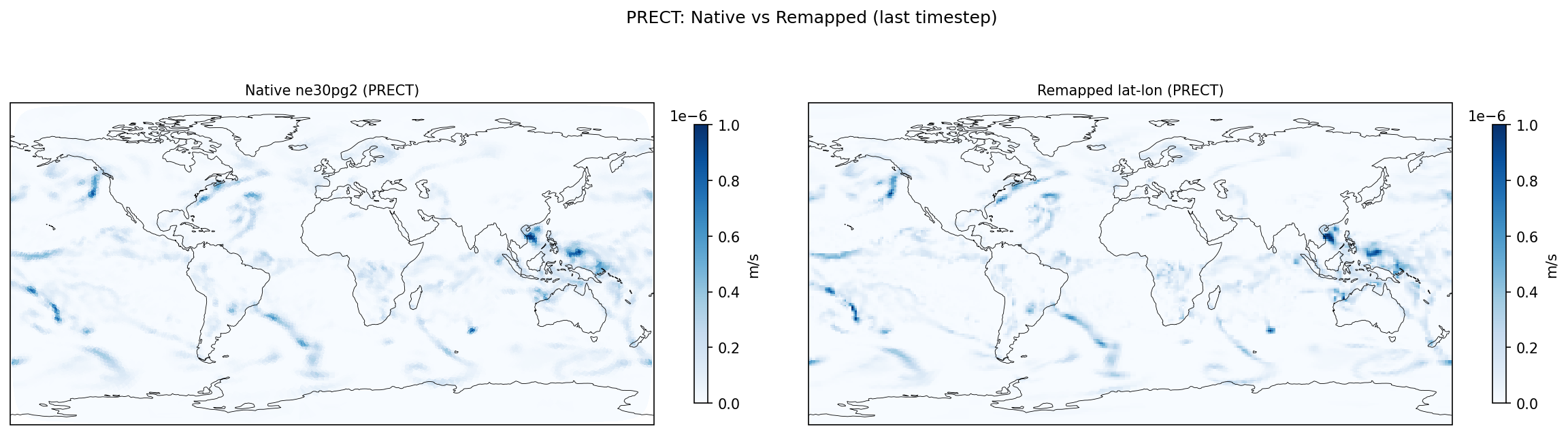

surface_precipitation_rate | PRECT | out | FOUND |

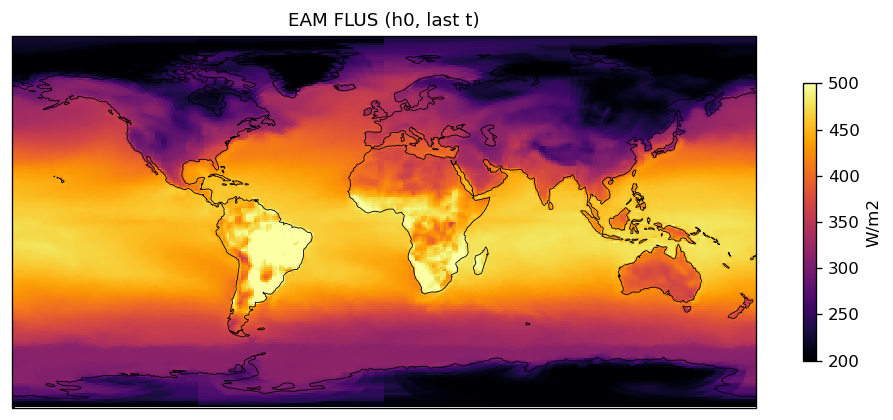

surface_upward_longwave_flux | FLUS | out | FOUND |

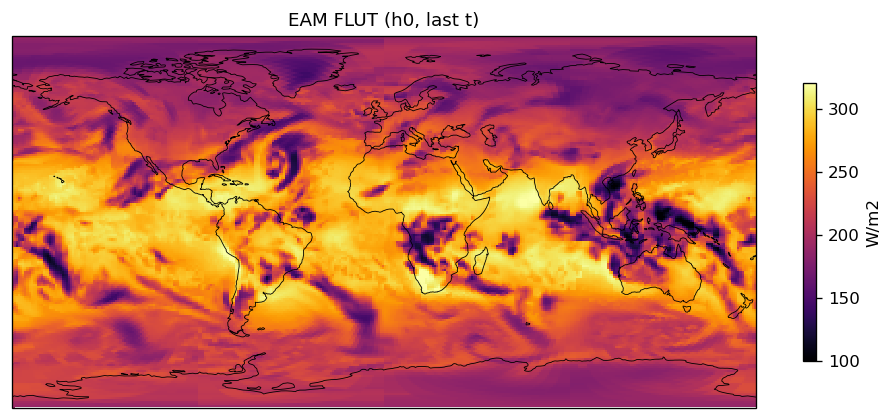

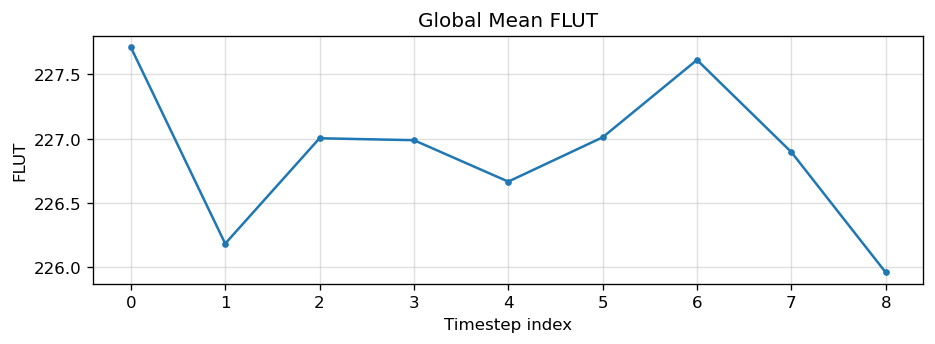

FLUT | FLUT | out | FOUND |

FLDS | FLDS | out | FOUND |

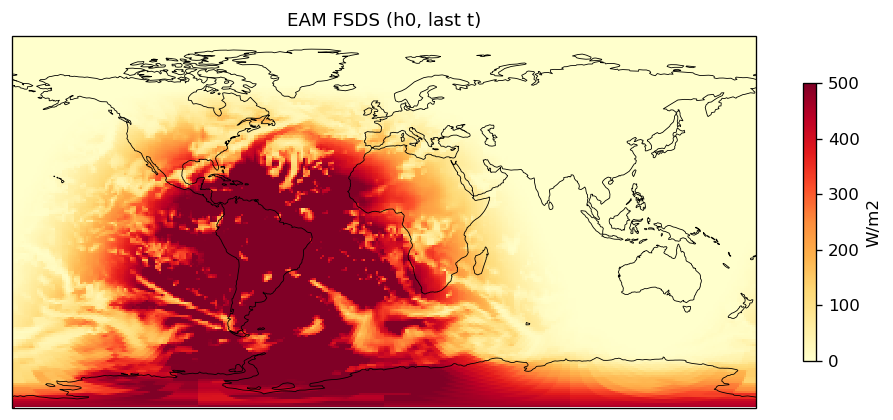

FSDS | FSDS | out | FOUND |

surface_upward_shortwave_flux | FSUS | out | FOUND |

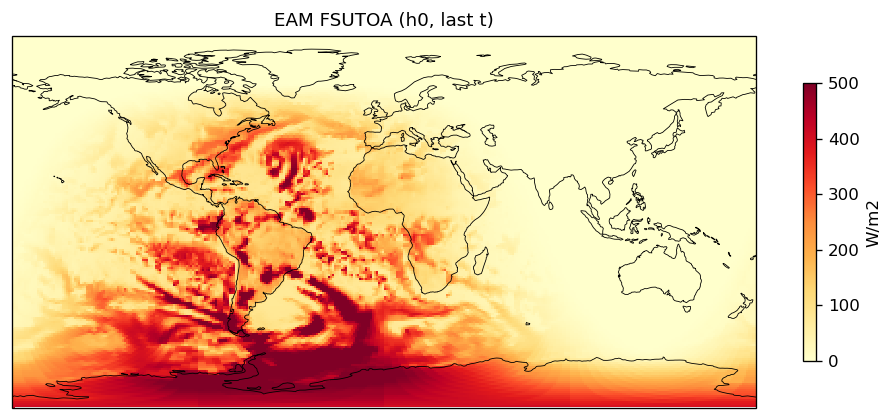

top_of_atmos_upward_shortwave_flux | FSUTOA | out | FOUND |

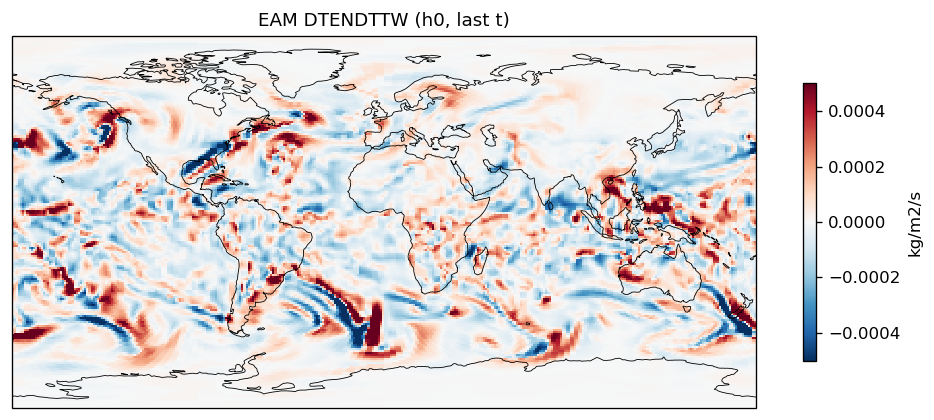

tendency_of_total_water_path_due_to_advection | DTENDTTW | out | FOUND |

TAUX | TAUX | out | FOUND |

TAUY | TAUY | out | FOUND |
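The ACE-to-EAM renames in the coverage table follow a small, regular pattern: a handful of explicit renames, `specific_total_water_k` becoming `STW_k`, and every other name unchanged. The sketch below encodes that mapping and a simple found/missing check. The function names `ace_to_eam` and `coverage` are hypothetical and are not claimed to match verify_eam.py.

```python
import re

# Explicit renames taken from the coverage table; other names pass through.
ACE_TO_EAM = {
    "land_fraction": "LANDFRAC",
    "ocean_fraction": "OCNFRAC",
    "sea_ice_fraction": "ICEFRAC",
    "surface_precipitation_rate": "PRECT",
    "surface_upward_longwave_flux": "FLUS",
    "surface_upward_shortwave_flux": "FSUS",
    "top_of_atmos_upward_shortwave_flux": "FSUTOA",
    "tendency_of_total_water_path_due_to_advection": "DTENDTTW",
}

_STW_LEVEL = re.compile(r"^specific_total_water_(\d+)$")

def ace_to_eam(name):
    """Translate an ACE canonical name to the EAM history-field name."""
    match = _STW_LEVEL.match(name)
    if match:
        return f"STW_{match.group(1)}"
    return ACE_TO_EAM.get(name, name)

def coverage(ace_names, eam_vars_on_file):
    """Split ACE names into (found, missing) given the EAM variables present."""
    found = [n for n in ace_names if ace_to_eam(n) in eam_vars_on_file]
    missing = [n for n in ace_names if ace_to_eam(n) not in eam_vars_on_file]
    return found, missing

# Toy usage: TAUX is absent from this hypothetical file.
print(coverage(["PS", "surface_precipitation_rate", "TAUX"], {"PS", "PRECT"}))
```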

File Inventory

| Category | File Count |
|---|---|
| EAM h0 | 3 |

Fill / NaN Report

Variable-level fill fraction details

| Component | Variable | Total Cells | Valid | Fill/NaN | Fill % |
|---|---|---|---|---|---|
| EAM | h0/PHIS | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/PS | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/TS | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/LHFLX | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/SHFLX | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/FLDS | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/FSDS | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/FSNTOA | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/FSUTOA | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/FLUT | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/SOLIN | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/FSUS | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/FLUS | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/ICEFRAC | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/LANDFRAC | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/OCNFRAC | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/TAUX | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/TAUY | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/PRECT | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/DTENDTTW | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/T_0 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/T_1 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/T_2 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/T_3 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/T_4 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/T_5 | 259,200 | 258,765 | 435 | 0.2% |
| EAM | h0/T_6 | 259,200 | 242,122 | 17,078 | 6.6% |
| EAM | h0/T_7 | 259,200 | 228,443 | 30,757 | 11.9% |
| EAM | h0/STW_0 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/STW_1 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/STW_2 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/STW_3 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/STW_4 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/STW_5 | 259,200 | 258,765 | 435 | 0.2% |
| EAM | h0/STW_6 | 259,200 | 242,122 | 17,078 | 6.6% |
| EAM | h0/STW_7 | 259,200 | 228,443 | 30,757 | 11.9% |
| EAM | h0/U_0 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/U_1 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/U_2 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/U_3 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/U_4 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/U_5 | 259,200 | 258,765 | 435 | 0.2% |
| EAM | h0/U_6 | 259,200 | 242,122 | 17,078 | 6.6% |
| EAM | h0/U_7 | 259,200 | 228,443 | 30,757 | 11.9% |
| EAM | h0/V_0 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/V_1 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/V_2 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/V_3 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/V_4 | 259,200 | 259,200 | 0 | 0.0% |
| EAM | h0/V_5 | 259,200 | 258,765 | 435 | 0.2% |
| EAM | h0/V_6 | 259,200 | 242,122 | 17,078 | 6.6% |
| EAM | h0/V_7 | 259,200 | 228,443 | 30,757 | 11.9% |
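Each Fill % entry in the table equals the Fill/NaN count divided by Total Cells, rounded to one decimal place. The sketch below reproduces the three nonzero percentages and shows one way to count filled cells in a flat list of values. The sentinel `1.0e20` and the function names are assumptions for illustration. The total of 259,200 is consistent with a 180 x 360 grid times 4 time samples (suggested by the remap file name and `mfilt = 4`), but that is an inference rather than something the report states.

```python
import math

def fill_stats(values, fill_value=1.0e20):
    """Return (total, valid, n_fill, fill_percent) for a flat list of floats.

    A cell counts as filled if it is NaN or equals the assumed sentinel.
    """
    total = len(values)
    n_fill = sum(1 for v in values if math.isnan(v) or v == fill_value)
    return total, total - n_fill, n_fill, 100.0 * n_fill / total

def fill_percent(n_fill, total):
    """Fill percentage rounded to one decimal, as displayed in the table."""
    return round(100.0 * n_fill / total, 1)

# Toy field: 3 of 8 cells are filled (two NaN, one sentinel).
toy = [280.0, float("nan"), 275.5, 1.0e20, 290.1, float("nan"), 300.0, 288.8]
print(fill_stats(toy))  # (8, 5, 3, 37.5)

# Nonzero rows from the table (layers 5, 6 and 7).
for n_fill in (435, 17078, 30757):
    print(n_fill, fill_percent(n_fill, 259200))  # 0.2, 6.6, 11.9

print(180 * 360 * 4)  # 259200
```

T, STW, U and V share identical fill counts in each layer, which is what one would expect if the mask depends only on the layer bounds and the surface pressure, for example where a pressure layer lies below the surface over high terrain. The report does not state the masking rule, so treat this as a plausible reading.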

Cross-Verification: FME vs Legacy

Both cases run identical physics from the same initial conditions (ICs). FME diagnostics are output-only and do not modify model state.

Result: 22 pass, 6 fail, 1 info.

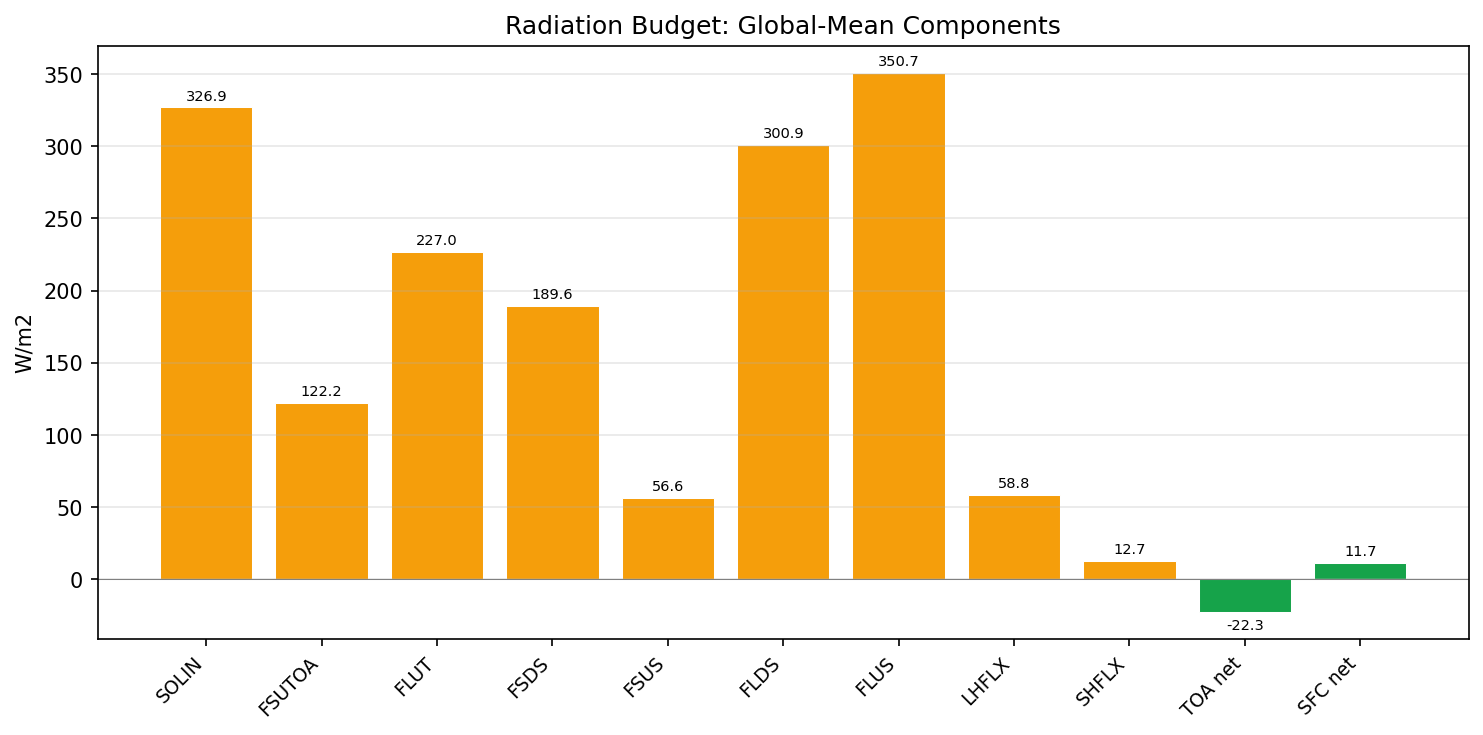

Area-Weighted Global-Mean Comparison (cos-lat weighted, <2% tolerance) (18/22)

| Field | Status | Detail |

|---|---|---|

| PS | PASS | fme=98541.6, leg=98604.1, rel=6.34e-04 |

| TS | PASS | fme=286.599, leg=286.705, rel=3.71e-04 |

| PHIS | PASS | fme=2269.56, leg=2233.85, rel=1.60e-02 |

| LANDFRAC | PASS | fme=0.293414, leg=0.292714, rel=2.39e-03 |

| OCNFRAC | PASS | fme=0.649502, leg=0.655231, rel=8.74e-03 |

| ICEFRAC | DIFF | fme=0.0570837, leg=0.052055, rel=9.66e-02 |

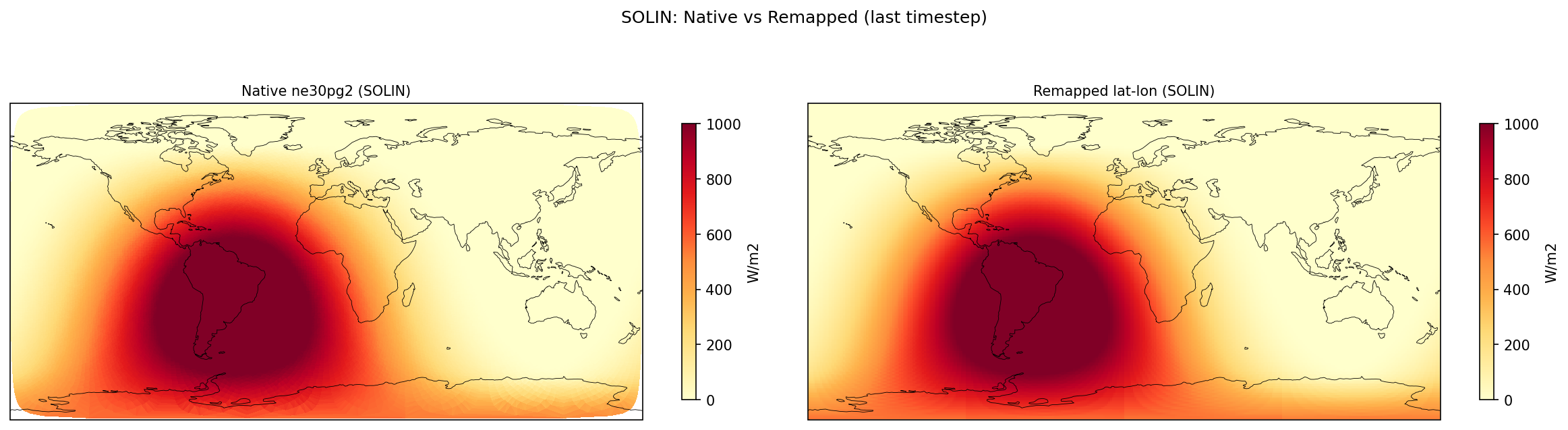

| SOLIN | PASS | fme=351.805, leg=351.571, rel=6.68e-04 |

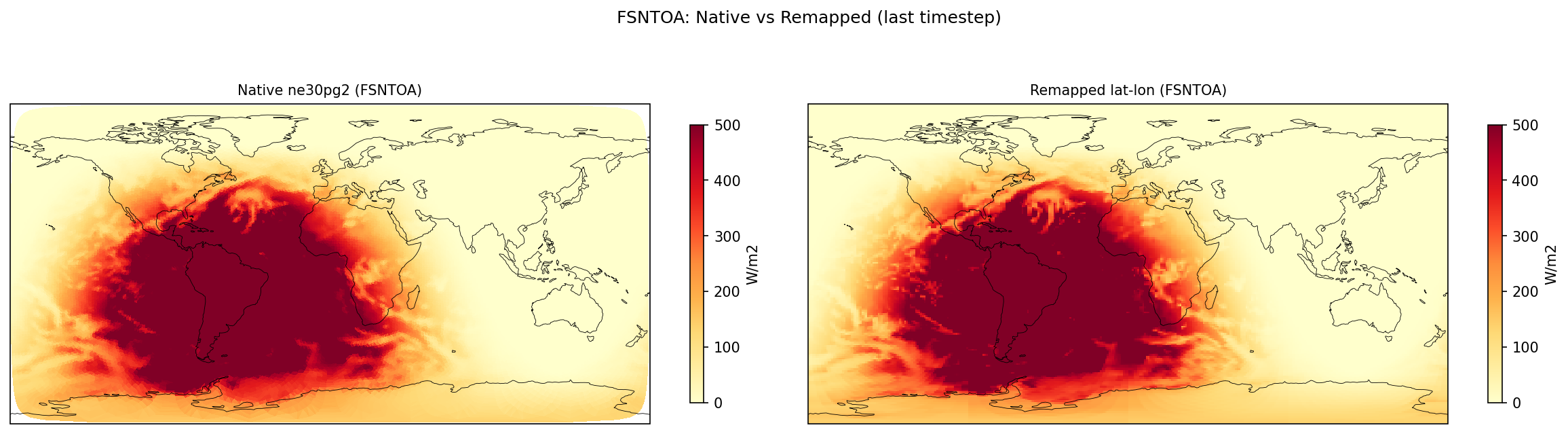

| FSNTOA | PASS | fme=246.991, leg=247.372, rel=1.54e-03 |

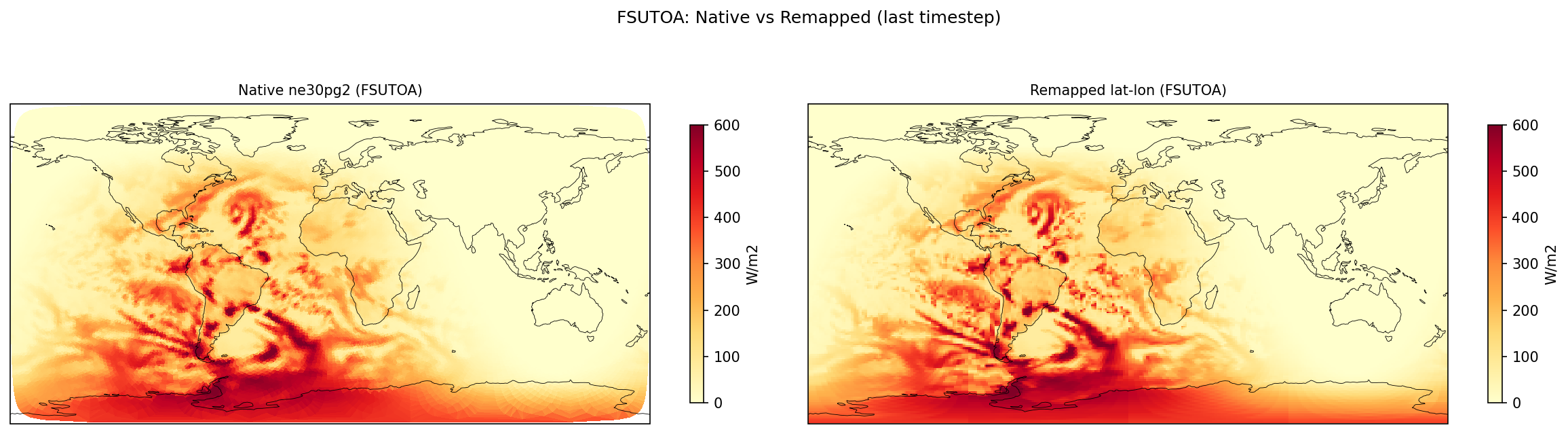

| FSUTOA | PASS | fme=104.815, leg=104.199, rel=5.91e-03 |

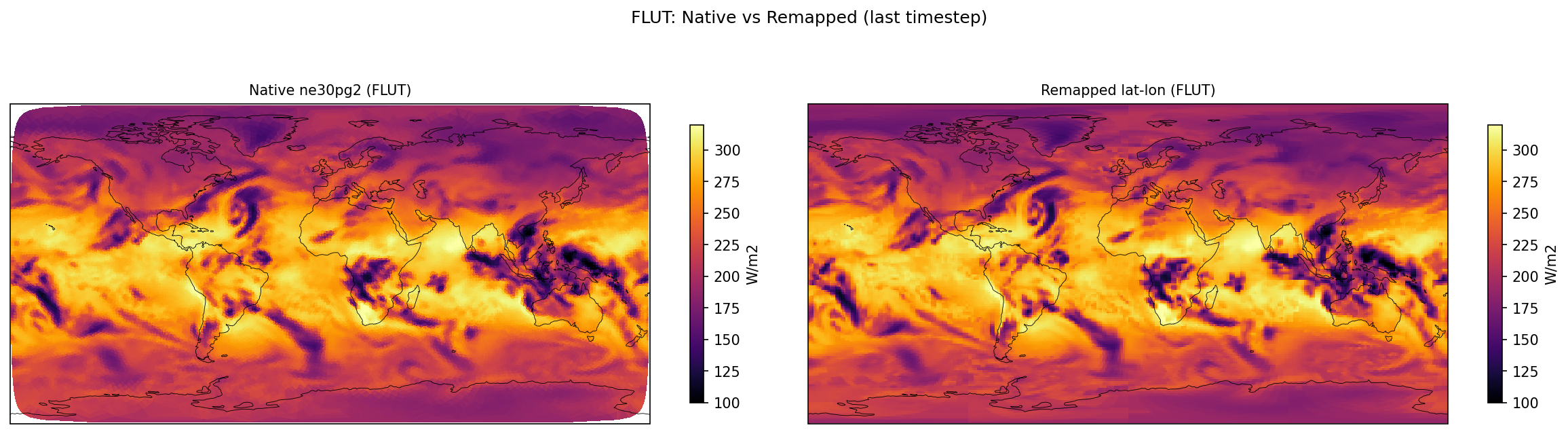

| FLUT | PASS | fme=239.598, leg=239.47, rel=5.37e-04 |

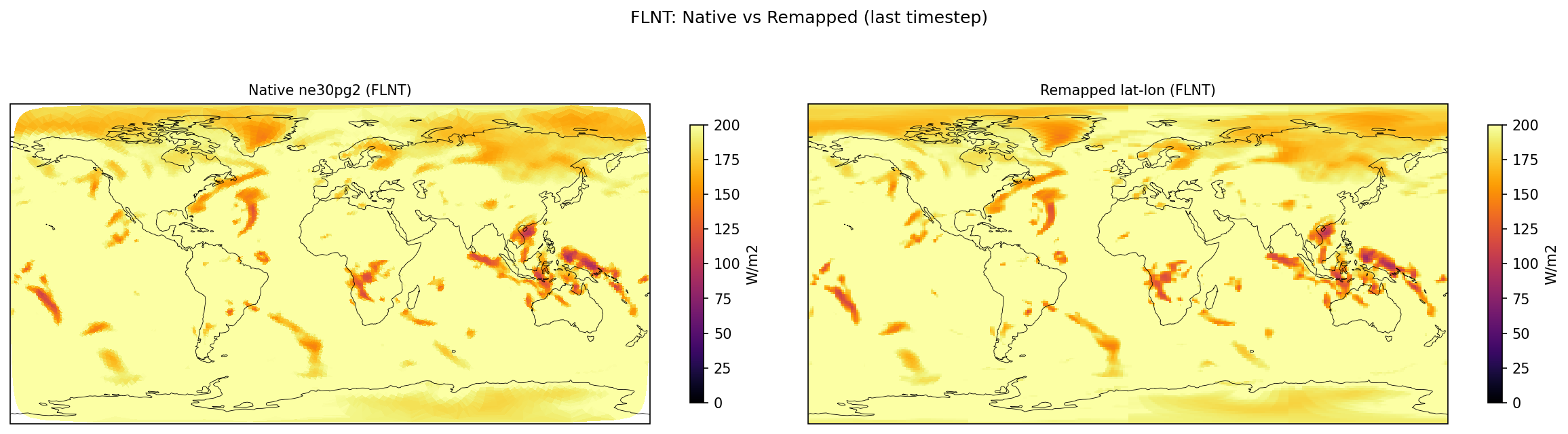

| FLNT | PASS | fme=239.4, leg=239.272, rel=5.36e-04 |

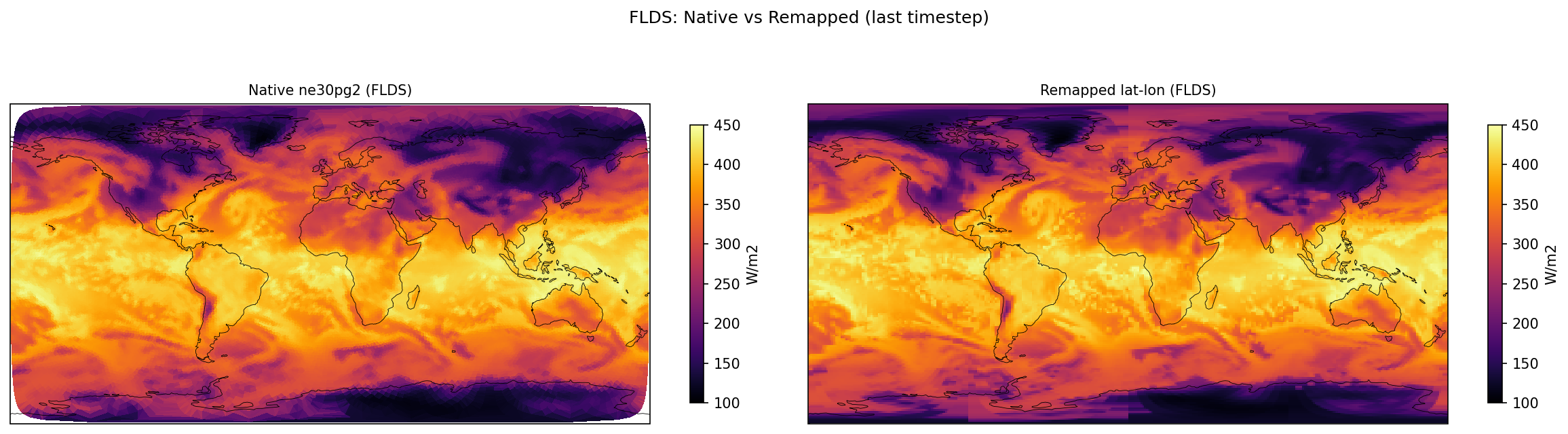

| FLDS | PASS | fme=337.223, leg=337.358, rel=4.01e-04 |

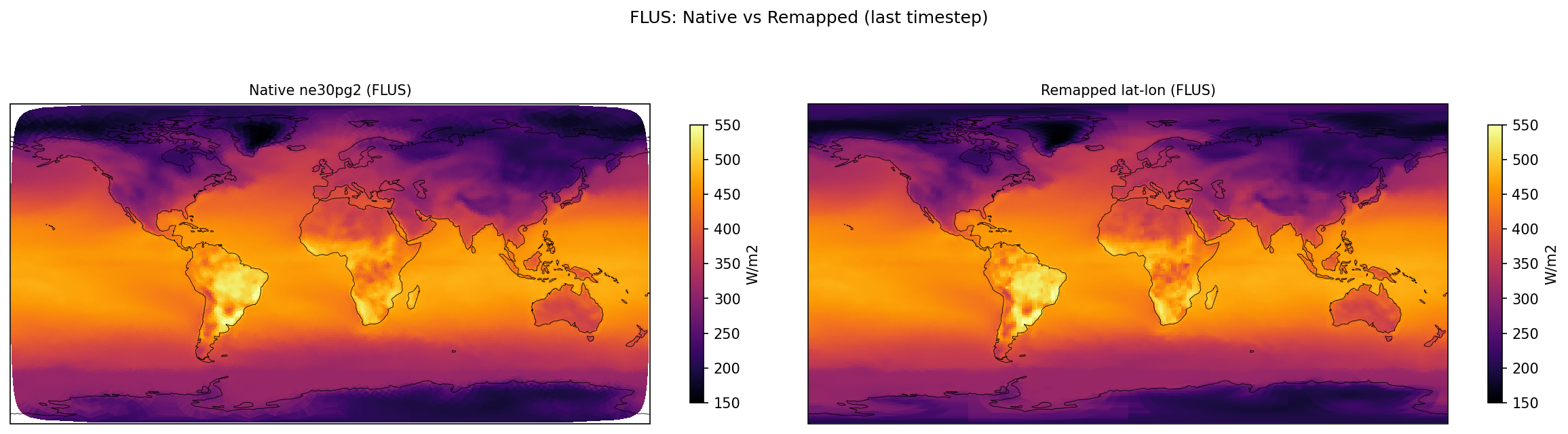

| FLUS | PASS | fme=391.271, leg=391.478, rel=5.29e-04 |

| FSDS | PASS | fme=197.113, leg=196.032, rel=5.52e-03 |

| FSUS | DIFF | fme=29.8047, leg=28.2625, rel=5.46e-02 |

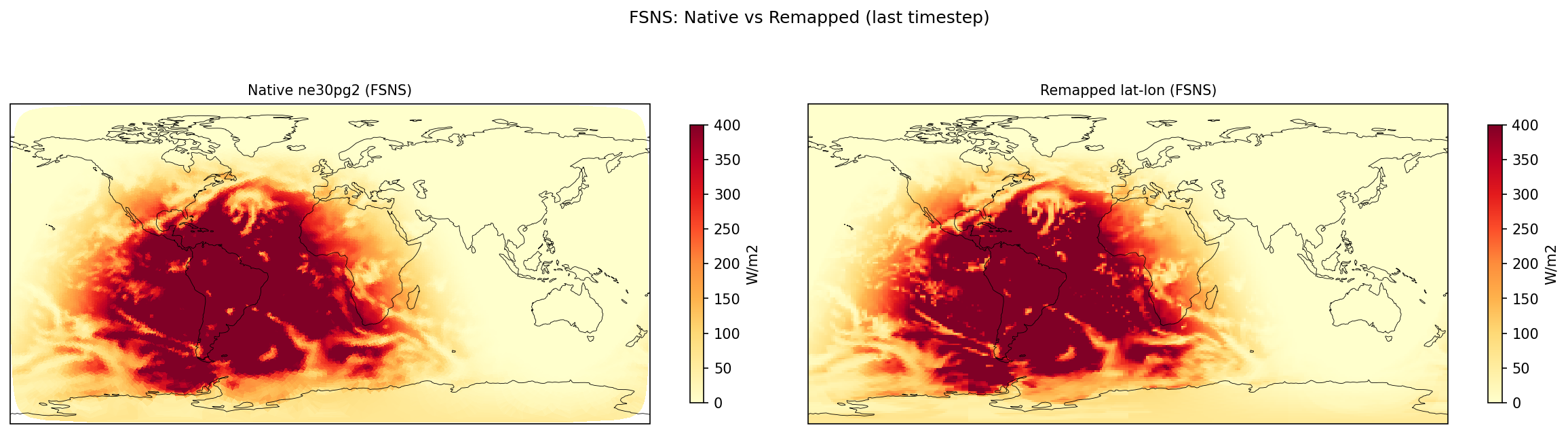

| FSNS | PASS | fme=167.309, leg=167.769, rel=2.74e-03 |

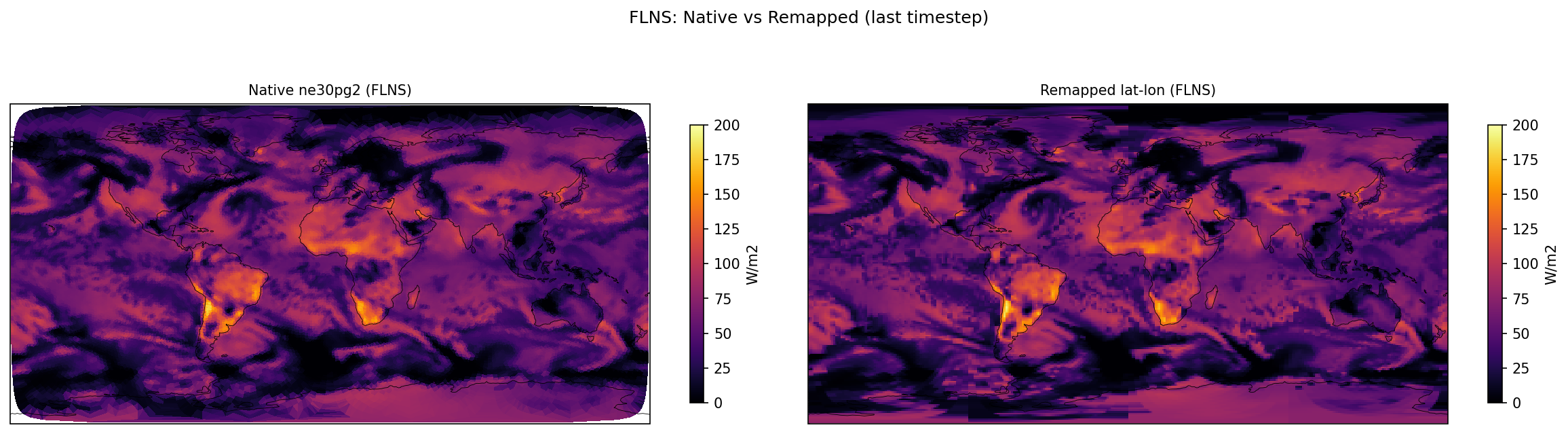

| FLNS | PASS | fme=54.0483, leg=54.1202, rel=1.33e-03 |

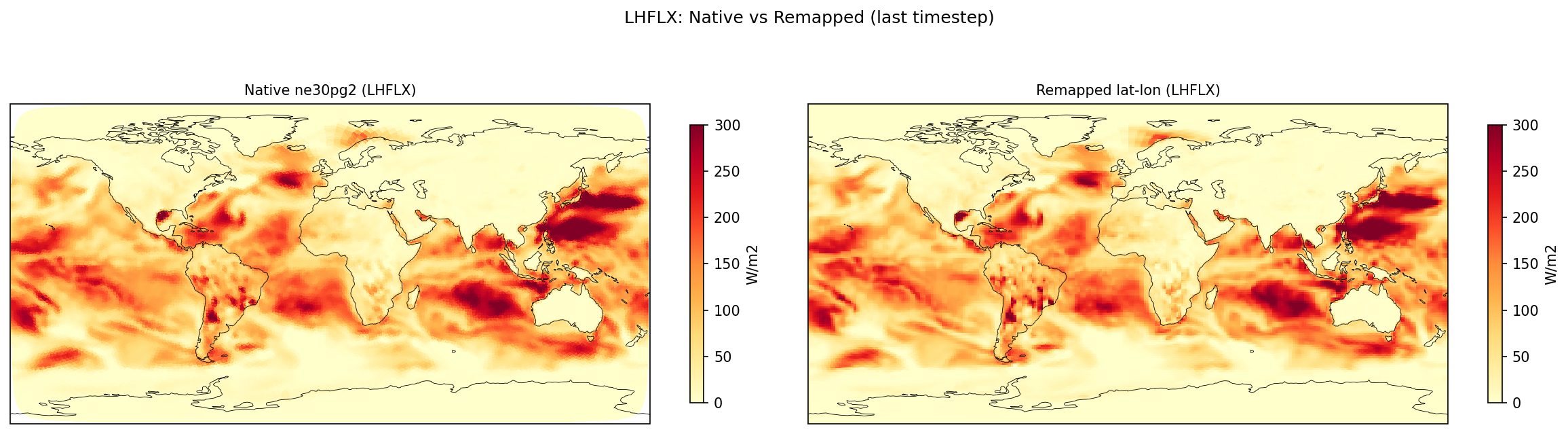

| LHFLX | PASS | fme=80.3688, leg=80.2388, rel=1.62e-03 |

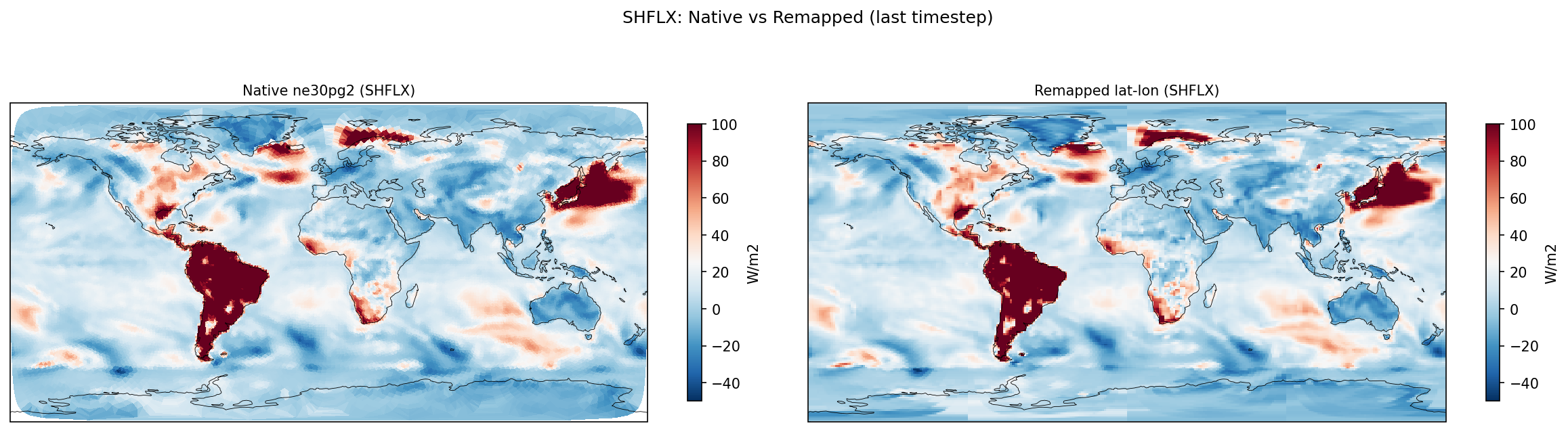

| SHFLX | PASS | fme=17.4038, leg=17.6629, rel=1.47e-02 |

| TAUX | DIFF | fme=-0.004732, leg=-0.00750318, rel=3.69e-01 |

| TAUY | DIFF | fme=0.00832446, leg=0.00850632, rel=2.14e-02 |

| PRECT | PASS | fme=3.17636e-08, leg=3.21008e-08, rel=1.05e-02 |

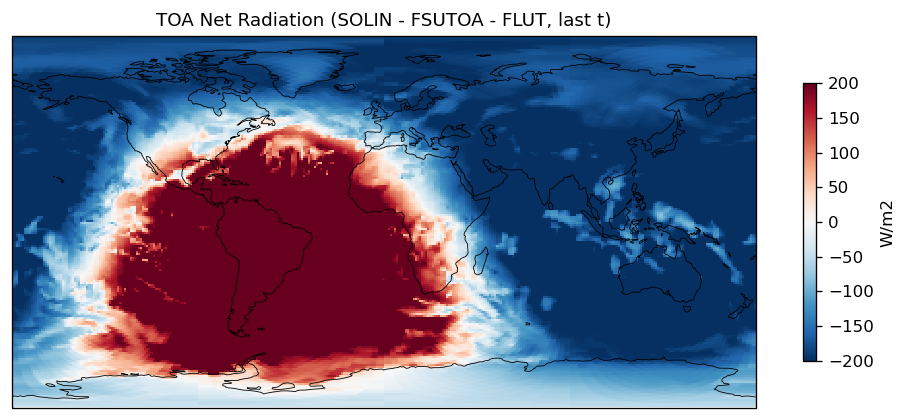
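The tabulated `rel` values are reproduced by |fme - leg| / |leg|, with DIFF assigned when the result is not below the 2% tolerance. The sketch below shows that comparison together with a cos-lat weighted global mean on a regular latitude-longitude grid. The report does not show the formulas verify_eam.py actually uses, so the function names, the NaN-skipping behaviour and the choice of the legacy value as denominator are assumptions that happen to match the numbers above.

```python
import math

def global_mean(field, lats_deg):
    """Area-weighted mean of field[j][i], weighting row j by cos(latitude).

    NaN cells are skipped (an assumption about how fill is handled).
    """
    num = 0.0
    den = 0.0
    for row, lat in zip(field, lats_deg):
        w = math.cos(math.radians(lat))
        for v in row:
            if math.isnan(v):
                continue
            num += w * v
            den += w
    return num / den

def rel_diff(fme, leg):
    """Relative difference of the FME mean with respect to the legacy mean."""
    return abs(fme - leg) / abs(leg)

def status(fme, leg, tol=0.02):
    """PASS if the relative difference is below the tolerance, else DIFF."""
    return "PASS" if rel_diff(fme, leg) < tol else "DIFF"

# Values copied from the table above.
print(round(rel_diff(0.0570837, 0.052055), 4), status(0.0570837, 0.052055))      # ICEFRAC
print(round(rel_diff(-0.004732, -0.00750318), 4), status(-0.004732, -0.00750318))  # TAUX
print(status(2269.56, 2233.85))  # PHIS stays under 2%
```

Note that a relative tolerance is harsh on fields whose global mean is close to zero: TAUX differs by about 0.003 in absolute terms yet reports a 37% relative difference.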

Diagnostic Figures

EAM

Comparisons

Cross-Verify

Performance Timing

GLOBAL STATISTICS (1024 MPI TASKS)

| name | on | processes | threads | count | walltotal | wallmax | wallmax (proc thrd) | wallmin | wallmin (proc thrd) |
|---|---|---|---|---|---|---|---|---|---|
| "CPL:INIT" | - | 1024 | 1024 | 1.024000e+03 | 7.488779e+04 | 76.070 | (610 0) | 69.766 | (983 0) |
| "CPL:cime_pre_init1" | - | 1024 | 1024 | 1.024000e+03 | 4.429327e+03 | 7.263 | (610 0) | 0.959 | (983 0) |
| "CPL:mpi_init" | - | 1024 | 1024 | 1.024000e+03 | 4.310037e+03 | 7.147 | (553 0) | 0.843 | (983 0) |
| "CPL:ESMF_Initialize" | - | 1024 | 1024 | 1.024000e+03 | 2.513084e+00 | 0.004 | (953 0) | 0.000 | (688 0) |
| "CPL:cime_pre_init2" | - | 1024 | 1024 | 1.024000e+03 | 4.093555e+02 | 0.403 | (374 0) | 0.396 | (131 0) |
| "CPL:seq_infodata_init" | - | 1024 | 1024 | 1.024000e+03 | 6.452165e+01 | 0.133 | (379 0) | 0.002 | (746 0) |
| "CPL:seq_timemgr_clockInit" | - | 1024 | 1024 | 1.024000e+03 | 4.781367e+00 | 0.008 | (135 0) | 0.001 | (897 0) |
| "CPL:cime_init" | - | 1024 | 1024 | 1.024000e+03 | 7.004605e+04 | 68.410 | (0 0) | 68.402 | (406 0) |
| "CPL:init_comps" | - | 1024 | 1024 | 1.024000e+03 | 5.926836e+04 | 57.887 | (121 0) | 57.875 | (168 0) |
| "CPL:comp_init_pre_all" | - | 1024 | 1024 | 1.024000e+03 | 2.891074e-01 | 0.002 | (871 0) | 0.000 | (939 0) |
| "comp_init_cc_iac" | - | 1024 | 1024 | 1.024000e+03 | 2.396527e+00 | 0.006 | (742 0) | 0.000 | (501 0) |
| "z_i:comp_init" | - | 1024 | 1024 | 1.024000e+03 | 8.589628e-01 | 0.002 | (701 0) | 0.000 | (87 0) |
| "CPL:comp_init_cc_atm" | - | 1024 | 1024 | 1.024000e+03 | 3.033496e+04 | 29.628 | (1 0) | 29.623 | (815 0) |
| "a_i:comp_init" | - | 1024 | 1024 | 2.048000e+03 | 3.517122e+04 | 34.483 | (25 0) | 34.246 | (137 0) |
| "a_i:cam_init" | - | 1024 | 1024 | 1.024000e+03 | 3.007682e+04 | 29.374 | (937 0) | 29.369 | (293 0) |
| "a_i:cam_initfiles_open" | - | 1024 | 1024 | 1.024000e+03 | 3.328119e+02 | 0.327 | (947 0) | 0.322 | (256 0) |
| "a_i:cam_initial" | - | 1024 | 1024 | 1.024000e+03 | 6.756930e+03 | 6.600 | (1 0) | 6.597 | (916 0) |
| "a_i:dyn_init1" | - | 1024 | 1024 | 1.024000e+03 | 6.018509e+02 | 1.653 | (296 0) | 0.176 | (542 0) |
| "a_i:hycoef_init" | - | 1024 | 1024 | 1.024000e+03 | 6.547680e+01 | 0.074 | (833 0) | 0.046 | (32 0) |
| "a_i:prim_init1" | - | 1024 | 1024 | 1.024000e+03 | 7.456527e+01 | 0.091 | (56 0) | 0.058 | (787 0) |
| "a_i:repro_sum_finalsum" | - | 1024 | 1024 | 9.011200e+04 | 4.052332e-02 | 0.000 | (132 0) | 0.000 | (655 0) |
| "a_i:compose_init" | - | 1024 | 1024 | 1.024000e+03 | 2.088705e+01 | 0.028 | (278 0) | 0.015 | (128 0) |
| "a_i:sl_init1" | - | 1024 | 1024 | 1.024000e+03 | 5.235690e+00 | 0.011 | (127 0) | 0.001 | (215 0) |
| "a_i:phys_grid_init" | - | 1024 | 1024 | 1.024000e+03 | 1.114781e+03 | 1.501 | (608 0) | 0.024 | (269 0) |
| "a_i:initial_conds" | - | 1024 | 1024 | 1.024000e+03 | 4.970694e+03 | 4.871 | (270 0) | 4.843 | (813 0) |
| "a_i:PIO:initdecomp" | - | 1024 | 1024 | 6.144000e+03 | 4.233158e+01 | 0.059 | (0 0) | 0.035 | (937 0) |
| "a_i:PIO:PIOc_initdecomp" | - | 1024 | 1024 | 6.144000e+03 | 4.149433e+01 | 0.058 | (0 0) | 0.035 | (937 0) |
| "a_i:dyn_init2" | - | 1024 | 1024 | 1.024000e+03 | 5.604318e+01 | 0.063 | (585 0) | 0.048 | (238 0) |
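One way to read these rows: `walltotal` appears to be the wall time summed over all processes, so dividing by the process count gives a mean per-process time that should fall between `wallmin` and `wallmax`. The sketch below applies that check to three rows copied from the table. The interpretation of `walltotal` is inferred from the numbers rather than stated in the report, and the function names are illustrative.

```python
def mean_wall_per_process(walltotal, processes):
    """Mean wall time per process, assuming walltotal sums over processes."""
    return walltotal / processes

def imbalance(wallmax, wallmin):
    """Ratio of slowest to fastest process; 1.0 means perfectly balanced."""
    return wallmax / wallmin if wallmin > 0 else float("inf")

# (walltotal, wallmax, wallmin) copied from the timing table, 1024 processes.
rows = {
    "CPL:INIT": (7.488779e+04, 76.070, 69.766),
    "a_i:comp_init": (3.517122e+04, 34.483, 34.246),
    "a_i:cam_init": (3.007682e+04, 29.374, 29.369),
}

for name, (walltotal, wallmax, wallmin) in rows.items():
    mean = mean_wall_per_process(walltotal, 1024)
    consistent = wallmin <= mean <= wallmax
    print(f"{name}: mean={mean:.3f} s, "
          f"imbalance={imbalance(wallmax, wallmin):.3f}, consistent={consistent}")
```

Timers that report a `wallmin` of 0.000 have no meaningful imbalance ratio at this print precision, which is why the helper returns infinity in that case.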

Reproducibility

- Command: /eagle/E3SMinput/mahf708/scratch/crux/mahf708-e3sm/cime_config/testmods_dirs/allactive/fme_output/verify_eam.py --rundir /eagle/E3SMinput/mahf708/scratch/crux/SMS_Ld2.ne30pg2_r05_IcoswISC30E3r5.WCYCL1850.crux_gnu.allactive-fme_output.20260415_162604_ycj7vh/run --legacy-rundir /eagle/E3SMinput/mahf708/scratch/crux/SMS_Ld2.ne30pg2_r05_IcoswISC30E3r5.WCYCL1850.crux_gnu.allactive-fme_legacy_output.20260415_162554_q16mxg/run --outdir /eagle/E3SMinput/mahf708/scratch/crux/share/fme_output_eam -v
- Script: /eagle/E3SMinput/mahf708/scratch/crux/mahf708-e3sm/cime_config/testmods_dirs/allactive/fme_output/verify_eam.py (git: 1ecfbf15b7, dirty)
- Run directory: /eagle/E3SMinput/mahf708/scratch/crux/SMS_Ld2.ne30pg2_r05_IcoswISC30E3r5.WCYCL1850.crux_gnu.allactive-fme_output.20260415_162604_ycj7vh/run
- Legacy run directory: /eagle/E3SMinput/mahf708/scratch/crux/SMS_Ld2.ne30pg2_r05_IcoswISC30E3r5.WCYCL1850.crux_gnu.allactive-fme_legacy_output.20260415_162554_q16mxg/run
- Host: mahf708@x1000c6s1b0n0
- Generated: 2026-04-15 19:09:12

user_nl_eam (FME)

! FME (Full Model Emulation) online output processing -- EAM

!

! Production configuration for ACE/Samudra training data generation.

!

! Vertical coarsening uses EAM L80 interface pressures matching the ACE

! offline coarsening indices [0,25,38,46,52,56,61,69,80] (see:

! github.com/ai2cm/ace/blob/exp/e3sm/scripts/data_process/configs/

! e3smv3-coupled-atm-1deg.yaml).

! Derived from hyai/hybi in eami_mam4_Linoz_ne30np4_L80_c20231010.nc.

empty_htapes = .true.

avgflag_pertape = 'I'

nhtfrq = -6

mfilt = 4

! Derived fields (computed at full resolution before vcoarsen)

! STW -> specific_total_water in ACE naming

derived_fld_defs = 'STW=Q+CLDICE+CLDLIQ+RAINQM'

! Vertical coarsening: 8 pressure layers matching ACE L80 coarsening.

! Interface pressures (Pa) from L80 hyai/hybi at indices [0,25,38,46,52,56,61,69,80]:

! 0: 10.0 Pa, 25: 4803.8 Pa, 38: 13913.1 Pa, 46: 26856.3 Pa,

! 52: 43998.3 Pa, 56: 59659.7 Pa, 61: 76854.2 Pa, 69: 90711.8 Pa, 80: 101325.0 Pa

vcoarsen_pbounds = 10.0, 4803.81, 13913.06, 26856.34,

43998.31, 59659.67, 76854.15, 90711.83, 101325.0

vcoarsen_avg_flds = 'T','U','V','STW'

! Tape 1: 6-hourly output.

! State variables are instantaneous; fluxes/tendencies use :A (time-averaged).

fincl1 = 'SOLIN:A', 'PHIS',

'LANDFRAC', 'OCNFRAC', 'ICEFRAC',

'PS', 'TS',

'T_0','T_1','T_2','T_3','T_4','T_5','T_6','T_7',

'STW_0', 'STW_1','STW_2','STW_3','STW_4','STW_5','STW_6','STW_7',

'U_0','U_1','U_2','U_3','U_4','U_5','U_6','U_7',

'V_0','V_1','V_2','V_3','V_4','V_5','V_6','V_7',

'FLUT:A', 'FLNT:A', 'FSNTOA:A', 'FSUTOA:A',

'FLDS:A', 'FLUS:A', 'FSDS:A', 'FSUS:A', 'FSNS:A', 'FLNS:A',

'LHFLX:A','SHFLX:A',

'TAUX:A', 'TAUY:A',

'PRECT:A',

'DTENDTTW'

horiz_remap_file(1) = '/eagle/E3SMinput/mahf708/fme_maps/map_ne30pg2_to_gaussian_180by360_shifted.nc'
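The namelist comments say the `vcoarsen_pbounds` values come from `hyai`/`hybi` at selected interface indices. In the usual hybrid sigma-pressure convention an interface pressure is `hyai * P0 + hybi * PS`. The sketch below shows that formula and one plausible form of layer averaging, weighting each model layer by its pressure overlap with the coarse layer. Several things here are assumptions: `P0 = 100000 Pa`, the reference surface pressure of 101325 Pa (inferred from the last bound), the overlap weighting itself, and all coefficient and column values, which are toy numbers. This is not the EAM implementation.

```python
import math

P0 = 100000.0      # Pa, assumed hybrid reference pressure
PS_REF = 101325.0  # Pa, assumed reference surface pressure

def interface_pressures(hyai, hybi, ps=PS_REF, p0=P0):
    """Hybrid-coordinate interface pressures: p = hyai * P0 + hybi * PS."""
    return [a * p0 + b * ps for a, b in zip(hyai, hybi)]

def coarsen_column(values, p_int, pbounds):
    """Overlap-weighted mean of fine-layer values in each coarse layer.

    values[k] is the fine-layer value between p_int[k] and p_int[k + 1].
    A coarse layer with no overlap (for example, below the surface)
    yields NaN.
    """
    out = []
    for top, bot in zip(pbounds[:-1], pbounds[1:]):
        weight_sum = 0.0
        acc = 0.0
        for k, v in enumerate(values):
            overlap = min(bot, p_int[k + 1]) - max(top, p_int[k])
            if overlap > 0.0:
                weight_sum += overlap
                acc += overlap * v
        out.append(acc / weight_sum if weight_sum > 0.0 else float("nan"))
    return out

# Toy two-interface example: model top and surface (hypothetical coefficients).
print(interface_pressures([0.0001, 0.0], [0.0, 1.0]))

# Derived field built at full resolution before coarsening, as in
# derived_fld_defs = 'STW=Q+CLDICE+CLDLIQ+RAINQM' (toy values per layer).
q      = [1.0, 2.0, 3.0, 4.0]
cldice = [0.1, 0.1, 0.0, 0.0]
cldliq = [0.0, 0.1, 0.2, 0.1]
rainqm = [0.0, 0.0, 0.1, 0.2]
stw = [a + b + c + d for a, b, c, d in zip(q, cldice, cldliq, rainqm)]

p_int = [0.0, 100.0, 200.0, 300.0, 400.0]  # toy fine interfaces (Pa)
pbounds = [0.0, 200.0, 400.0, 500.0]       # toy coarse bounds (Pa)
print(coarsen_column(stw, p_int, pbounds))  # last layer is NaN: below the column
```

With this kind of masking, the lowest coarse layers would be filled wherever the surface pressure is lower than the layer's upper bound, which would be consistent with fill appearing only in layers 5 to 7.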

user_nl_eam (Legacy)

cosp_lite = .true.

empty_htapes = .true.

avgflag_pertape = 'I'

nhtfrq = -6

mfilt = 4

fincl1 = 'T','Q','U','V','PS','PHIS',

'CLDLIQ','CLDICE','RAINQM',

'TMQ','TGCLDLWP','TGCLDIWP',

'TEFIX','TS','QREFHT','U10','TREFHT',

'PSL','LANDFRAC','OCNFRAC','ICEFRAC',

'FSDS:A','FLDS:A','FSNS:A','FLNS:A','SOLIN:A',

'FSNTOA:A','FSUTOA:A','FLUT:A','FLNT:A',

'FSUS:A','FLUS:A',

'LHFLX:A','QFLX:A',

'SHFLX:A','PRECT:A','PRECC:A','PRECSL:A','PRECSC:A','TAUX:A','TAUY:A'

verify_eam.py (3064 lines)